This guide shows you how to deploy and configure a performance-optimized Red Hat Enterprise Linux (RHEL) high-availability (HA) cluster for an SAP HANA scale-up system on Google Cloud.

This guide uses the Terraform configuration provided by Google Cloud to deploy the Google Cloud resources for running the SAP HANA scale-up HA system such that the system satisfies SAP supportability requirements and conforms to best practices.

This guide is intended for advanced SAP HANA users who are familiar with Linux HA configurations for SAP HANA.

The system that this guide deploys

By following this guide, you deploy an SAP HANA scale-up system in an HA cluster on RHEL on Google Cloud that includes the following resources:

- A primary and secondary site, each containing an instance of SAP HANA, hosted on a Compute Engine instance. These sites are deployed in different zones, but in the same region.

- SAP-certified SSD-based Persistent Disk or Hyperdisk volumes for the SAP HANA data and log volumes, which are attached to the compute instances.

- Synchronous replication between the primary and secondary sites.

- A Pacemaker-managed HA cluster that includes the following:

  - The RHEL high-availability setup.

  - A fencing mechanism, which uses either the fence_gce agent or SBD-based fencing.

  - A virtual IP (VIP) that uses a Layer 4 TCP internal load balancer implementation that includes the following:

    - An internal passthrough Network Load Balancer to reroute traffic in the HA cluster in the event of a failure.

    - A reservation of the IP address that you select for the VIP.

    - Two Compute Engine instance groups.

    - A Compute Engine health check.

This guide does not include information about deploying SAP NetWeaver.

Architecture diagram

The following diagrams show the architecture of the system that this guide helps you deploy:

For fence_gce based fencing

The following architecture diagram shows the resources deployed in an SAP HANA scale-up HA system when you opt to use the fence_gce agent-based fencing mechanism:

For SBD based fencing

The following architecture diagram shows the resources deployed in an SAP HANA scale-up HA system when you opt to use SBD-based fencing:

Before you begin

Before you deploy the SAP HANA scale-up HA system on RHEL, make sure that the following prerequisites are met:

- You have read the SAP HANA planning guide and the SAP HANA high-availability planning guide.

- You or your organization has a Google Cloud account and has created a Google Cloud project for the SAP HANA deployment. For information about how to create Google Cloud accounts and projects, see Set up your Google account.

If you require your SAP workload to run in compliance with data residency, access control, support personnel, or regulatory requirements, then you must create the required Assured Workloads folder. For more information, see Compliance and sovereign controls for SAP on Google Cloud.

If OS Login is enabled in your project metadata, then you need to disable OS Login temporarily until your deployment is complete. For deployment purposes, this procedure configures SSH keys in instance metadata. When OS Login is enabled, metadata-based SSH key configurations are disabled, and this deployment fails. After deployment is complete, you can enable OS Login again.


If you are using VPC internal DNS, then the value of the vmDnsSetting variable in your project metadata must be either GlobalOnly or ZonalPreferred to enable the resolution of the node names across zones. The default setting of vmDnsSetting is ZonalOnly.
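The following bash functions sketch both project-metadata changes described above. Each one sets a project metadata key with gcloud compute project-info add-metadata: enable-oslogin for OS Login and vmDnsSetting for DNS. The function names and the DRY_RUN switch are illustrative assumptions and are not part of the Google Cloud deployment files. When DRY_RUN is set, the functions print each command instead of running it. The sketch covers project-level metadata only. It doesn't detect OS Login that is enforced at the instance level or by an organization policy.

```bash
# Illustrative helper: print the command when DRY_RUN is set, otherwise run it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Set the project-wide enable-oslogin metadata key to TRUE or FALSE.
# Usage: set_project_os_login PROJECT_ID TRUE|FALSE
set_project_os_login() {
  local project_id="$1" value="$2"
  case "$value" in
    TRUE|FALSE) ;;
    *) echo "enable-oslogin must be TRUE or FALSE, not: ${value}" >&2; return 1 ;;
  esac
  run gcloud compute project-info add-metadata \
    --project="$project_id" --metadata="enable-oslogin=${value}"
}

# Set vmDnsSetting so that node names resolve across zones.
# Usage: set_vm_dns_setting PROJECT_ID [GlobalOnly|ZonalPreferred]
set_vm_dns_setting() {
  local project_id="$1" setting="${2:-ZonalPreferred}"
  case "$setting" in
    GlobalOnly|ZonalPreferred) ;;
    *) echo "vmDnsSetting must be GlobalOnly or ZonalPreferred, not: ${setting}" >&2; return 1 ;;
  esac
  run gcloud compute project-info add-metadata \
    --project="$project_id" --metadata="vmDnsSetting=${setting}"
}
```

For example, run set_project_os_login PROJECT_ID FALSE before you deploy. Run set_project_os_login PROJECT_ID TRUE after the deployment is complete.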

Setting up your Google account

A Google account is required to work with Google Cloud.

- Sign up for a Google account if you don't already have one.

- (Optional) If you require your SAP workload to run in compliance with data residency, access control, support personnel, or regulatory requirements, then you must create the required Assured Workloads folder. For more information, see Compliance and sovereign controls for SAP on Google Cloud.

- Log in to the Google Cloud console, and create a new project.

- Enable your billing account.

- Configure SSH keys so that you are able to use them to SSH into your Compute Engine VM instances. Use the Google Cloud CLI to create a new SSH key.

- Use the gcloud CLI or Google Cloud console to add the SSH keys to your project metadata. This allows you to access any Compute Engine VM instance created within this project, except for instances that explicitly disable project-wide SSH keys.
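Project metadata stores SSH keys in the ssh-keys key, with one USERNAME:PUBLIC_KEY entry per line. The following bash sketch builds such an entry from an existing public key file, for example one created with ssh-keygen. It then passes the entry to gcloud compute project-info add-metadata with --metadata-from-file.

This sets the entire project-wide ssh-keys value. Any keys already stored there are replaced unless you add them to the file first. The function names and the DRY_RUN switch are illustrative assumptions.

```bash
# Illustrative helper: print the command when DRY_RUN is set, otherwise run it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Print one ssh-keys metadata entry in the USERNAME:PUBLIC_KEY format.
ssh_keys_entry() {
  local username="$1" pubkey_file="$2"
  printf '%s:%s\n' "$username" "$(cat "$pubkey_file")"
}

# Write the entry to a temporary file and pass that file to gcloud.
# Caution: this replaces the whole project-wide ssh-keys value.
add_project_ssh_key() {
  local project_id="$1" username="$2" pubkey_file="$3" entries_file
  entries_file="$(mktemp)" || return 1
  ssh_keys_entry "$username" "$pubkey_file" > "$entries_file"
  run gcloud compute project-info add-metadata \
    --project="$project_id" \
    --metadata-from-file="ssh-keys=${entries_file}"
  local rc=$?
  rm -f "$entries_file"
  return "$rc"
}
```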

Creating a network

For security purposes, create a new network. You can control who has access by adding firewall rules or by using another access control method.

If your project has a default VPC network, then don't use it. Instead, create your own VPC network so that the only firewall rules in effect are those that you create explicitly.

During deployment, Compute Engine instances typically require access to the internet to download Google Cloud's Agent for SAP. If you are using one of the SAP-certified Linux images that are available from Google Cloud, then the compute instance also requires access to the internet in order to register the license and to access OS vendor repositories. A configuration with a NAT gateway and with VM network tags supports this access, even if the target compute instances don't have external IPs.

To set up networking:

Console

- In the Google Cloud console, go to the VPC networks page.

- Click Create VPC network.

- Enter a Name for the network.

The name must adhere to the naming convention. VPC networks use the Compute Engine naming convention.

- For Subnet creation mode, choose Custom.

- In the New subnet section, specify the following configuration parameters for a subnet:

- Enter a Name for the subnet.

- For Region, select the Compute Engine region where you want to create the subnet.

- For IP stack type, select IPv4 (single-stack) and then enter an IP address range in the CIDR format, such as 10.1.0.0/24. This is the primary IPv4 range for the subnet. If you plan to add more than one subnet, then assign non-overlapping CIDR IP ranges for each subnetwork in the network. Note that each subnetwork and its internal IP ranges are mapped to a single region.

- Click Done.

- To add more subnets, click Add subnet and repeat the preceding steps. You can add more subnets to the network after you have created the network.

- Click Create.

gcloud

- Go to Cloud Shell.

- To create a new network in the custom subnetworks mode, run:

gcloud compute networks create NETWORK_NAME --subnet-mode custom

Replace NETWORK_NAME with the name of the new network. The name must adhere to the naming convention. VPC networks use the Compute Engine naming convention.

Specify --subnet-mode custom to avoid using the default auto mode, which automatically creates a subnet in each Compute Engine region. For more information, see Subnet creation mode.

- Create a subnetwork, and specify the region and IP range:

gcloud compute networks subnets create SUBNETWORK_NAME \
    --network NETWORK_NAME --region REGION --range RANGE

Replace the following:

- SUBNETWORK_NAME: the name of the new subnetwork
- NETWORK_NAME: the name of the network you created in the previous step
- REGION: the region where you want the subnetwork
- RANGE: the IP address range, specified in CIDR format, such as 10.1.0.0/24

If you plan to add more than one subnetwork, assign non-overlapping CIDR IP ranges for each subnetwork in the network. Note that each subnetwork and its internal IP ranges are mapped to a single region.

- Optionally, repeat the previous step and add additional subnetworks.
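Both the console and gcloud procedures require non-overlapping CIDR ranges when a network has more than one subnet. A minimal bash sketch of that check follows. It handles IPv4 ranges only and does no input validation. The function names are hypothetical.

```bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo "$(( (o[0] << 24) + (o[1] << 16) + (o[2] << 8) + o[3] ))"
}

# Print the first and last addresses of a CIDR range as integers.
cidr_bounds() {
  local ip="${1%/*}" prefix="${1#*/}" base size
  base=$(ip_to_int "$ip")
  size=$(( 1 << (32 - prefix) ))
  # Clear the host bits so that 10.1.0.7/24 starts at 10.1.0.0.
  base=$(( base & ~(size - 1) & 0xFFFFFFFF ))
  echo "$base $(( base + size - 1 ))"
}

# Succeed (exit status 0) if the two CIDR ranges share any address.
cidrs_overlap() {
  local a1 a2 b1 b2
  read -r a1 a2 <<< "$(cidr_bounds "$1")"
  read -r b1 b2 <<< "$(cidr_bounds "$2")"
  (( a1 <= b2 && b1 <= a2 ))
}
```

For example, cidrs_overlap 10.1.0.0/24 10.1.1.0/24 fails because the ranges are disjoint, so both could be used as subnet ranges in the same network.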

Setting up a NAT gateway

If you need to create one or more VMs without public IP addresses, then you need to use network address translation (NAT) to enable the VMs to access the internet. Use Cloud NAT, a Google Cloud distributed, software-defined managed service that lets VMs send outbound packets to the internet and receive any corresponding established inbound response packets. Alternatively, you can set up a separate VM as a NAT gateway.

To create a Cloud NAT instance for your project, see Using Cloud NAT.

After you configure Cloud NAT for your project, your VM instances can securely access the internet without a public IP address.
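Using Cloud NAT is the authoritative procedure. As a rough sketch, a common minimal setup has two parts:

- A Cloud Router in the subnet's region.
- A NAT configuration that auto-allocates external IP addresses and covers all subnet ranges.

In the following bash sketch, the router and NAT names are derived from the network name. Those names and the DRY_RUN switch are hypothetical.

```bash
# Illustrative helper: print the command when DRY_RUN is set, otherwise run it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Create a Cloud Router and a Cloud NAT configuration for one region.
# Usage: create_cloud_nat PROJECT_ID NETWORK REGION
create_cloud_nat() {
  local project_id="$1" network="$2" region="$3"
  local router="${network}-nat-router" nat="${network}-nat"   # hypothetical names
  run gcloud compute routers create "$router" \
    --project="$project_id" --network="$network" --region="$region" || return 1
  run gcloud compute routers nats create "$nat" \
    --project="$project_id" --router="$router" --region="$region" \
    --auto-allocate-nat-external-ips --nat-all-subnet-ip-ranges
}
```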

Adding firewall rules

By default, an implied firewall rule blocks incoming connections from outside your Virtual Private Cloud (VPC) network. To allow incoming connections, set up a firewall rule for your VM. After an incoming connection is established with a VM, traffic is permitted in both directions over that connection.

You can also create a firewall rule to allow external access to specified ports, or to restrict access between VMs on the same network. If the default VPC network type is used, some additional default rules also apply, such as the default-allow-internal rule, which allows connectivity between VMs on the same network on all ports.

Depending on the IT policy that is applicable to your environment, you might need to isolate or otherwise restrict connectivity to your database host, which you can do by creating firewall rules.

Depending on your scenario, you can create firewall rules to allow access for:

- The default SAP ports that are listed in TCP/IP of All SAP Products.

- Connections from your computer or your corporate network environment to your Compute Engine instance. If you are unsure of what IP address to use, talk to your company's network administrator.

- Communication between VMs in the SAP HANA subnetwork, including communication between nodes in an SAP HANA scale-out system or communication between the database server and application servers in a 3-tier architecture. You can enable communication between VMs by creating a firewall rule to allow traffic that originates from within the subnetwork.

To create a firewall rule, complete the following steps:

Console

In the Google Cloud console, go to the Compute Engine Firewall page.

At the top of the page, click Create firewall rule.

- In the Network field, select the network where your VM is located.

- In the Targets field, specify the resources on Google Cloud that this rule applies to. For example, specify All instances in the network. Or, to limit the rule to specific instances on Google Cloud, enter tags in Specified target tags.

- In the Source filter field, select one of the following:

- IP ranges to allow incoming traffic from specific IP addresses. Specify the range of IP addresses in the Source IP ranges field.

- Subnets to allow incoming traffic from a particular subnetwork. Specify the subnetwork name in the following Subnets field. You can use this option to allow access between the VMs in a 3-tier or scale-out configuration.

- In the Protocols and ports section, select Specified protocols and ports and enter tcp:PORT_NUMBER.

Click Create to create your firewall rule.

gcloud

Create a firewall rule by using the following command:

gcloud compute firewall-rules create firewall-name \
    --direction=INGRESS --priority=1000 \
    --network=network-name --action=ALLOW --rules=protocol:port \
    --source-ranges ip-range --target-tags=network-tags

Deploy resources for SAP HANA

To deploy the Google Cloud resources for running SAP HANA, such as compute instances, you use the Terraform configuration provided by Google Cloud.

The following instructions use the Cloud Shell, but are generally applicable to the Google Cloud CLI.

To deploy the Google Cloud resources for running SAP HANA, complete the following steps:

Verify that your current quotas for resources such as Compute Engine instances, Persistent Disk volumes, Hyperdisk volumes, and CPUs are sufficient for the SAP HANA systems that you are about to install. If your quotas are insufficient, then your deployment fails.

For information about quota requirements to run SAP HANA, see Pricing and quota considerations for SAP HANA.
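One way to spot-check regional quotas before you run Terraform is sketched below. It lists each quota's metric, limit, and usage with gcloud compute regions describe. A small awk comparison then decides whether the remaining headroom covers what you need.

The function names, the DRY_RUN switch, and the --flatten/--format projection are assumptions for illustration. The authoritative requirements are in Pricing and quota considerations for SAP HANA.

```bash
# Illustrative helper: print the command when DRY_RUN is set, otherwise run it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# List "metric limit usage" for every quota in a region.
show_region_quotas() {
  local project_id="$1" region="$2"
  run gcloud compute regions describe "$region" --project="$project_id" \
    --flatten=quotas --format="value(quotas.metric,quotas.limit,quotas.usage)"
}

# Succeed if (limit - usage) >= needed. gcloud reports values such as 24.0,
# so the comparison is done in awk rather than in bash integer arithmetic.
quota_sufficient() {
  awk -v limit="$1" -v usage="$2" -v needed="$3" \
    'BEGIN { exit !((limit - usage) >= needed) }'
}
```

For example, the two n2-highmem-32 instances in the example configuration later in this guide need 64 vCPUs. Any iSCSI target instance needs more on top of that. So quota_sufficient LIMIT USAGE 64 should succeed for the N2_CPUS metric in your region.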

Open the Cloud Shell or, if you installed the gcloud CLI on your local workstation, then open a terminal.

In your working directory, download the manual_sap_hana_scaleup_ha.tf configuration file:

wget https://storage.googleapis.com/cloudsapdeploy/terraform/latest/terraform/sap_hana_ha/terraform/manual_sap_hana_scaleup_ha.tf

Edit the manual_sap_hana_scaleup_ha.tf file and update values for the arguments, as described in the following table.

If you're using Cloud Shell, then you can edit this file by using the Cloud Shell code editor. To open the Cloud Shell code editor, click the pencil icon in the upper right corner of the Cloud Shell terminal window.

- source (String): Specifies the location and version of the Terraform module to use during deployment.

The manual_sap_hana_scaleup_ha.tf configuration file includes two instances of the source argument: one that is active and one that is included as a comment. The source argument that is active by default specifies latest as the module version. The second instance of the source argument, which by default is deactivated by a leading # character, specifies a timestamp that identifies a module version.

If you need all of your deployments to use the same module version, then remove the leading # character from the source argument that specifies the version timestamp and add it to the source argument that specifies latest.

- project_id (String): Specify the ID of your Google Cloud project in which you are deploying this system. For example, my-project-x.

- instance_name (String): Specify a name for the host VM instance. The name can contain lowercase letters, numbers, and hyphens. The VM instances for the worker and standby hosts use the same name with a w and the host number appended to the name.

- machine_type (String): Specify the type of Compute Engine virtual machine (VM) on which you need to run your SAP system. If you need a custom VM type, then specify a predefined VM type with a number of vCPUs that is closest to the number you need while still being larger. After deployment is complete, modify the number of vCPUs and the amount of memory. For example, n1-highmem-32.

- zone (String): Specify the zone in which you are deploying your SAP system. The zone must be in the same region that you selected for your subnet.

For example, if your subnet is deployed in the us-central1 region, then you can specify a zone such as us-central1-a.

- sole_tenant_deployment (Boolean): Optional. If you want to provision a sole-tenant node for your SAP HANA deployment, then specify the value true. The default value is false. This argument is available in manual_sap_hana_scaleup_ha version 1.6.859618681 or later.

- sole_tenant_name_prefix (String): Optional. If you're provisioning a sole-tenant node for your SAP HANA deployment, then you can use this argument to specify a prefix that Terraform sets for the names of the corresponding sole-tenant template and sole-tenant group. The default value is st-SID_LC. For information about sole-tenant templates and sole-tenant groups, see Sole tenancy overview. This argument is available in manual_sap_hana_scaleup_ha version 1.6.859618681 or later.

- sole_tenant_node_type (String): Optional. If you want to provision a sole-tenant node for your SAP HANA deployment, then specify the node type that you want to set for the corresponding node template. This argument is available in manual_sap_hana_scaleup_ha version 1.6.859618681 or later.

- subnetwork (String): Specify the name of the subnetwork that you created in a previous step. If you are deploying to a shared VPC, then specify this value as SHARED_VPC_PROJECT_ID/SUBNETWORK. For example, myproject/network1.

- linux_image (String): Specify the name of the Linux operating system image on which you want to deploy your SAP system. For example, rhel-9-2-sap-ha. For the list of available operating system images, see the Images page in the Google Cloud console.

- linux_image_project (String): Specify the Google Cloud project that contains the image that you have specified for the argument linux_image. This project might be your own project or a Google Cloud image project. For a Compute Engine image, specify rhel-sap-cloud. To find the image project for your operating system, see Operating system details.

- sap_hana_sid (String): To automatically install SAP HANA on the deployed VMs, specify the SAP HANA system ID. The ID must consist of three alphanumeric characters and begin with a letter. All letters must be in uppercase. For example, ED1.

- sap_hana_backup_size (Integer): Optional. Specify the size of the /hanabackup volume in GB. If you don't specify this argument or set it to 0, then the installation script provisions the Compute Engine instance with a HANA backup volume of two times the total memory.

- include_backup_disk (Boolean): Optional. The default value is true, which directs Terraform to deploy a separate disk to host the /hanabackup directory.

The disk type is determined by the backup_disk_type argument. The size of this disk is determined by the sap_hana_backup_size argument.

If you set the value for include_backup_disk as false, then no disk is deployed for the /hanabackup directory. You can use only one backup option in your Terraform configuration: either disk-based backup or Backint-based backup. For more information, see Backup management.

- backup_disk_type (String): Optional. For scale-up deployments, specify the type of Persistent Disk or Hyperdisk that you want to deploy for the /hanabackup volume. For information about the default disk deployment performed by the Terraform configurations provided by Google Cloud, see Disk deployment by Terraform. The following are the valid values for this argument: pd-ssd, pd-balanced, pd-standard, hyperdisk-extreme, hyperdisk-balanced, and pd-extreme. This argument is available in manual_sap_hana_scaleup_ha module version 1.6.859618681 or later.

- sap_hana_sapsys_gid (Integer): Optional. Overrides the default group ID for sapsys. The default value is 79.

- disk_type (String): Optional. Specify the default type of Persistent Disk or Hyperdisk volume that you want to deploy for the SAP data and log volumes in your deployment. For information about the default disk deployment performed by the Terraform configurations provided by Google Cloud, see Disk deployment by Terraform. The following are valid values for this argument: pd-ssd, pd-balanced, hyperdisk-extreme, hyperdisk-balanced, and pd-extreme. In SAP HANA scale-up deployments, a separate Balanced Persistent Disk is also deployed for the /hana/shared directory.

You can override this default disk type and the associated default disk size and default IOPS using some advanced arguments. For more information, navigate to your working directory, then run the terraform init command, and then see the /.terraform/modules/manual_sap_hana_scaleup_ha/variables.tf file. Before you use these arguments in production, make sure to test them in a non-production environment.

If you want to use SAP HANA Native Storage Extension (NSE), then you need to provision larger disks by using the advanced arguments.

- use_single_shared_data_log_disk (Boolean): Optional. The default value is false, which directs Terraform to deploy a separate persistent disk or Hyperdisk for each of the following SAP volumes: /hana/data, /hana/log, /hana/shared, and /usr/sap. To mount these SAP volumes on the same persistent disk or Hyperdisk, specify true.

- network_tags (String): Optional. Specify one or more comma-separated network tags that you want to associate with your VM instances for firewall or routing purposes. If you specify public_ip = false and do not specify a network tag, then make sure to provide another means of access to the internet.

- nic_type (String): Optional. Specify the network interface to use with the VM instance. You can specify the value GVNIC or VIRTIO_NET. To use a Google Virtual NIC (gVNIC), you need to specify an OS image that supports gVNIC as the value for the linux_image argument. For the OS image list, see Operating system details. If you do not specify a value for this argument, then the network interface is automatically selected based on the machine type that you specify for the machine_type argument. This argument is available in sap_hana module version 202302060649 or later.

- public_ip (Boolean): Optional. Determines whether or not a public IP address is added to your VM instance. The default value is true.

- service_account (String): Optional. Specify the email address of a user-managed service account to be used by the host VMs and by the programs that run on the host VMs. For example, svc-acct-name@project-id.iam.gserviceaccount.com. If you specify this argument without a value, or omit it, then the installation script uses the Compute Engine default service account. For more information, see Identity and access management for SAP programs on Google Cloud.

- reservation_name (String): Optional. To use a specific Compute Engine VM reservation for this deployment, specify the name of the reservation. By default, the installation script selects any available Compute Engine reservation based on the following conditions. For a reservation to be usable, regardless of whether you specify a name or the installation script selects it automatically, the reservation must be set with the following:

  - The specificReservationRequired option is set to true or, in the Google Cloud console, the Select specific reservation option is selected.

  - Some Compute Engine machine types support CPU platforms that are not covered by the SAP certification of the machine type. If the target reservation is for any of the following machine types, then the reservation must specify the minimum CPU platforms as indicated:

    - n1-highmem-32: Intel Broadwell
    - n1-highmem-64: Intel Broadwell
    - n1-highmem-96: Intel Skylake
    - m1-megamem-96: Intel Skylake

  The minimum CPU platforms for all of the other machine types that are certified by SAP for use on Google Cloud conform to the SAP minimum CPU requirement.

- vm_static_ip (String): Optional. Specify a valid static IP address for the VM instance. If you don't specify one, then an IP address is automatically generated for your VM instance. This argument is available in sap_hana_scaleout module version 202306120959 or later.

The following example is a completed Terraform configuration to deploy a Pacemaker-managed SAP HANA scale-up HA system on RHEL on Google Cloud:

For fence_gce based fencing

# ...
module "sap_hana_primary" {
  source = "https://storage.googleapis.com/cloudsapdeploy/terraform/latest/terraform/sap_hana/sap_hana_module.zip"
  #
  # By default, this source file uses the latest release of the terraform module
  # for SAP on Google Cloud. To fix your deployments to a specific release
  # of the module, comment out the source argument above and uncomment the source argument below.
  #
  # source = "https://storage.googleapis.com/cloudsapdeploy/terraform/YYYYMMDDHHMM/terraform/sap_hana/sap_hana_module.zip"
  #
  # ...
  #
  project_id          = "example-project-123456"
  zone                = "us-central1-a"
  machine_type        = "n2-highmem-32"
  subnetwork          = "example-subnet-us-central1"
  linux_image         = "rhel-9-6-sap-ha"
  linux_image_project = "rhel-sap-cloud"

  instance_name = "example-ha-vm1"
  sap_hana_sid  = "AB1"

  # ...
  network_tags    = ["hana-ha-ntwk-tag"]
  service_account = "sap-deploy-example@example-project-123456.iam.gserviceaccount.com"
  vm_static_ip    = "10.0.0.1"

  # ...
}

# ...
module "sap_hana_secondary" {
  source = "https://storage.googleapis.com/cloudsapdeploy/terraform/latest/terraform/sap_hana/sap_hana_module.zip"
  #
  # By default, this source file uses the latest release of the terraform module
  # for SAP on Google Cloud. To fix your deployments to a specific release
  # of the module, comment out the source argument above and uncomment the source argument below.
  #
  # source = "https://storage.googleapis.com/cloudsapdeploy/terraform/YYYYMMDDHHMM/terraform/sap_hana/sap_hana_module.zip"
  #
  # ...
  #
  project_id          = "example-project-123456"
  zone                = "us-central1-b"
  machine_type        = "n2-highmem-32"
  subnetwork          = "example-subnet-us-central1"
  linux_image         = "rhel-9-6-sap-ha"
  linux_image_project = "rhel-sap-cloud"

  instance_name = "example-ha-vm2"
  sap_hana_sid  = "AB1"

  # ...
  network_tags    = ["hana-ha-ntwk-tag"]
  service_account = "sap-deploy-example@example-project-123456.iam.gserviceaccount.com"
  vm_static_ip    = "10.0.0.2"

  # ...
}

For SBD based fencing

# ...
module "sap_hana_primary" {
  source = "https://storage.googleapis.com/cloudsapdeploy/terraform/latest/terraform/sap_hana/sap_hana_module.zip"
  #
  # By default, this source file uses the latest release of the terraform module
  # for SAP on Google Cloud. To fix your deployments to a specific release
  # of the module, comment out the source argument above and uncomment the source argument below.
  #
  # source = "https://storage.googleapis.com/cloudsapdeploy/terraform/YYYYMMDDHHMM/terraform/sap_hana/sap_hana_module.zip"
  #
  # ...
  #
  project_id          = "example-project-123456"
  zone                = "us-central1-a"
  machine_type        = "n2-highmem-32"
  subnetwork          = "example-subnet-us-central1"
  linux_image         = "rhel-9-6-sap-ha"
  linux_image_project = "rhel-sap-cloud"

  instance_name = "example-ha-vm1"
  sap_hana_sid  = "AB1"

  # ...
  network_tags    = ["hana-ha-ntwk-tag"]
  service_account = "sap-deploy-example@example-project-123456.iam.gserviceaccount.com"
  vm_static_ip    = "10.0.0.1"

  # ...
}

# ...
module "sap_hana_secondary" {
  source = "https://storage.googleapis.com/cloudsapdeploy/terraform/latest/terraform/sap_hana/sap_hana_module.zip"
  #
  # By default, this source file uses the latest release of the terraform module
  # for SAP on Google Cloud. To fix your deployments to a specific release
  # of the module, comment out the source argument above and uncomment the source argument below.
  #
  # source = "https://storage.googleapis.com/cloudsapdeploy/terraform/YYYYMMDDHHMM/terraform/sap_hana/sap_hana_module.zip"
  #
  # ...
  #
  project_id          = "example-project-123456"
  zone                = "us-central1-b"
  machine_type        = "n2-highmem-32"
  subnetwork          = "example-subnet-us-central1"
  linux_image         = "rhel-9-6-sap-ha"
  linux_image_project = "rhel-sap-cloud"

  instance_name = "example-ha-vm2"
  sap_hana_sid  = "AB1"

  # ...
  network_tags    = ["hana-ha-ntwk-tag"]
  service_account = "sap-deploy-example@example-project-123456.iam.gserviceaccount.com"
  vm_static_ip    = "10.0.0.2"

  # ...
}

resource "google_compute_instance" "iscsi_target_instance" {
  # Compute Engine instance names allow only lowercase letters, digits, and hyphens.
  name         = "iscsi-target-instance"
  project      = "example-project-123456"
  machine_type = "n1-highmem-32"
  zone         = "us-central1-c"

  boot_disk {
    initialize_params {
      image = "rhel-9-6-sap-ha"
    }
  }

  network_interface {
    network = "example-network-us-central1"
  }

  service_account {
    email = "sv-account-example@example-project-123456.iam.gserviceaccount.com"
    # Do not edit the following line
    scopes = ["cloud-platform"]
  }

  # Do not edit the following line
  metadata_startup_script = "curl -s https://storage.googleapis.com/cloudsapdeploy/terraform/latest/terraform/sap_hana_ha/manual_instance_startup.sh | bash -s https://storage.googleapis.com/cloudsapdeploy/terraform/latest/terraform/sap_hana_ha/manual_instance_startup.sh"
}
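An invalid sap_hana_sid is easy to miss in a long configuration file. The following bash sketch checks each sap_hana_sid value in a .tf file against the rule from the argument table: three alphanumeric characters, beginning with a letter, with all letters in uppercase.

It uses a simple sed pattern rather than an HCL parser. As a result, it only finds assignments written on a single line, as in the example above. It also doesn't check SAP's list of reserved SIDs. The function names are hypothetical.

```bash
# Succeed if the argument matches: one uppercase letter, then two
# uppercase letters or digits (for example, AB1 or ED1).
is_valid_hana_sid() {
  [[ "$1" =~ ^[[:upper:]][[:upper:][:digit:]]{2}$ ]]
}

# Print OK or INVALID for every single-line sap_hana_sid assignment in a .tf file.
# Returns 1 if any value is invalid.
check_tf_sids() {
  local tf_file="$1" sid rc=0
  while read -r sid; do
    if is_valid_hana_sid "$sid"; then
      echo "OK: $sid"
    else
      echo "INVALID: $sid"
      rc=1
    fi
  done < <(sed -n 's/^[[:space:]]*sap_hana_sid[[:space:]]*=[[:space:]]*"\([^"]*\)".*/\1/p' "$tf_file")
  return "$rc"
}
```

For example, run check_tf_sids manual_sap_hana_scaleup_ha.tf before terraform plan.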


Initialize your current working directory and download the Terraform provider plugin and module files for Google Cloud:

terraform init

The terraform init command prepares your working directory for other Terraform commands.

To force a refresh of the provider plugin and configuration files in your working directory, specify the --upgrade flag. If the --upgrade flag is omitted and you don't make any changes in your working directory, Terraform uses the locally cached copies, even if latest is specified in the source URL.

terraform init --upgrade

Optionally, create the Terraform execution plan:

terraform plan

The terraform plan command shows the changes required by your current configuration. If you skip this step, the terraform apply command automatically creates a new plan and prompts you to approve it.

Apply the Terraform execution plan:

terraform apply

When you are prompted to approve the actions, enter yes.

The terraform apply command sets up the Google Cloud resources according to the arguments that you define in the Terraform configuration file and then hands control over to a script that prepares the OS for the HA cluster.

While Terraform has control, status messages are written to the Cloud Shell. After the scripts are invoked, status messages are written to Logging and are viewable in the Google Cloud console, as described in Check the logs.
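You might want terraform apply to execute exactly the plan that you reviewed. One variant of the preceding steps saves the plan to a file and then applies that file. Terraform doesn't prompt for approval when it applies a saved plan.

The following bash sketch assumes Terraform 0.14 or later, which is required for the -chdir option. The function name, the tfplan file name, and the DRY_RUN switch are illustrative.

```bash
# Illustrative helper: print the command when DRY_RUN is set, otherwise run it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Initialize, write the plan to a file, and apply that saved plan in WORKDIR.
deploy_with_saved_plan() {
  local workdir="$1"
  run terraform -chdir="$workdir" init --upgrade || return 1
  run terraform -chdir="$workdir" plan -out=tfplan || return 1
  # Applying a saved plan skips the interactive "yes" prompt.
  run terraform -chdir="$workdir" apply tfplan
}
```

Review the output of the plan step before the apply step runs. In an interactive session, you might run the three commands separately instead.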

Create firewall rules that allow access to the host VMs

If you haven't done so already, then create firewall rules that allow access to each host compute instance from the following sources:

- For configuration purposes, your local workstation, a bastion host, or a jump server

- For access between the cluster nodes, the other host VMs in the HA cluster

When you create VPC firewall rules, you specify the network tags that you defined in the Terraform configuration file to designate your host VMs as the target for the rule.

To verify deployment, define a rule to allow SSH connections on port 22 from a bastion host or your local workstation.

For access between the cluster nodes, add a firewall rule that allows all connection types on any port from other compute instances in the same subnetwork.

Before proceeding to the next section, make sure to create the firewall rules for verifying deployment and for intra-cluster communication. For information about how to create firewall rules, see Adding firewall rules.
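The following bash function sketches the two rules described above. Both rules target the network tag from the example configuration (hana-ha-ntwk-tag):

- An ingress rule for SSH on port 22 from an administrative source range.
- A rule that allows all protocols and ports from the subnetwork's range.

The rule names, the source ranges that you pass in, and the DRY_RUN switch are assumptions. Adjust them to the IT policy that applies to your environment.

```bash
# Illustrative helper: print the command when DRY_RUN is set, otherwise run it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Create the two ingress rules for the HA host VMs.
# Usage: create_hana_ha_firewall_rules PROJECT_ID NETWORK ADMIN_RANGE SUBNET_RANGE [TAG]
create_hana_ha_firewall_rules() {
  local project_id="$1" network="$2" admin_range="$3" subnet_range="$4"
  local tag="${5:-hana-ha-ntwk-tag}"

  # SSH on port 22 from a bastion host, jump server, or workstation range.
  run gcloud compute firewall-rules create "${network}-allow-ssh" \
    --project="$project_id" --network="$network" \
    --direction=INGRESS --action=ALLOW --rules=tcp:22 \
    --source-ranges="$admin_range" --target-tags="$tag" || return 1

  # All protocols and ports from other instances in the same subnetwork.
  run gcloud compute firewall-rules create "${network}-allow-intra-cluster" \
    --project="$project_id" --network="$network" \
    --direction=INGRESS --action=ALLOW --rules=all \
    --source-ranges="$subnet_range" --target-tags="$tag"
}
```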

Verify the deployment of resources

To verify that your Terraform configuration file has successfully deployed the Google Cloud resources required for your SAP HANA scale-up HA system, you can perform the following:

Check the logs

In the Google Cloud console, open Cloud Logging to monitor installation progress and check for errors.

Filter the logs:

Logs Explorer

In the Logs Explorer page, go to the Query pane.

From the Resource drop-down menu, select Global, and then click Add.

If you don't see the Global option, then in the query editor, enter the following query:

resource.type="global" "Deployment"

Click Run query.

Legacy Logs Viewer

- In the Legacy Logs Viewer page, from the basic selector menu, select Global as your logging resource.

Analyze the filtered logs:

- If "--- Finished" is displayed, then the deployment processing is complete and you can proceed to the next step.

- If you see a quota error:

On the IAM & Admin Quotas page, increase any of your quotas that do not meet the SAP HANA requirements that are listed in the SAP HANA planning guide.

Delete the VMs and persistent disks from the failed installation, for example by running terraform destroy in your working directory.

Rerun your deployment.

- If
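To follow the same log entries from a terminal, you might read them with gcloud logging read, using the query from the Logs Explorer step. You can then look for the "--- Finished" marker.

In the following bash sketch, the function names, the entry limit, and the DRY_RUN switch are assumptions. The sketch treats any text that mentions "quota" as a quota error. That is a rough heuristic, not a documented log format.

```bash
# Illustrative helper: print the command when DRY_RUN is set, otherwise run it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Read recent deployment log entries (default YAML output) for a project.
read_deployment_logs() {
  local project_id="$1"
  run gcloud logging read 'resource.type="global" "Deployment"' \
    --project="$project_id" --limit=200
}

# Classify log text from stdin as finished, quota-error, or in-progress.
deployment_status() {
  local logs
  logs="$(cat)"
  if grep -q -- '--- Finished' <<< "$logs"; then
    echo "finished"
  elif grep -qi 'quota' <<< "$logs"; then
    echo "quota-error"   # heuristic only
  else
    echo "in-progress"
  fi
}
```

For example: read_deployment_logs PROJECT_ID | deployment_status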

Check the configuration of the compute instances

Connect to each compute instance by using SSH. From the Compute Engine VM instances page you can click the SSH button for each instance, or you can use your preferred SSH method.

Switch to the root user:

sudo su -

At the command prompt, enter df -h. Ensure that you see output that includes the /hana directories, such as /hana/data.

example-ha-vm1:~ # df -h
Filesystem                        Size  Used Avail Use% Mounted on
devtmpfs                          126G  8.0K  126G   1% /dev
tmpfs                             189G   54M  189G   1% /dev/shm
tmpfs                             126G   34M  126G   1% /run
tmpfs                             126G     0  126G   0% /sys/fs/cgroup
/dev/sda3                          30G  5.4G   25G  18% /
/dev/sda2                          20M  2.9M   18M  15% /boot/efi
/dev/mapper/vg_hana-shared        251G   50G  202G  20% /hana/shared
/dev/mapper/vg_hana-sap            32G  281M   32G   1% /usr/sap
/dev/mapper/vg_hana-data          426G  8.0G  418G   2% /hana/data
/dev/mapper/vg_hana-log           125G  4.3G  121G   4% /hana/log
/dev/mapper/vg_hanabackup-backup  512G  6.4G  506G   2% /hanabackup
tmpfs                              26G     0   26G   0% /run/user/473
tmpfs                              26G     0   26G   0% /run/user/900
tmpfs                              26G     0   26G   0% /run/user/0
tmpfs                              26G     0   26G   0% /run/user/1003
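To make the df -h check repeatable, the following bash sketch reads df -h output on standard input. It prints any expected SAP HANA mount point that is missing.

The expected list (/hana/data, /hana/log, /hana/shared, and /usr/sap) comes from the example output above. Add /hanabackup if you kept include_backup_disk set to true. If you set use_single_shared_data_log_disk to true, then the volume layout might differ, so adjust the list. The function name is hypothetical, and the sketch assumes that mount points contain no spaces.

```bash
# Print each expected mount point that doesn't appear in `df -h` output on stdin.
missing_hana_mounts() {
  local -a expected=(/hana/data /hana/log /hana/shared /usr/sap)
  local mounts m
  # The mount point is the last column; skip the header line.
  mounts="$(awk 'NR > 1 { print $NF }')"
  for m in "${expected[@]}"; do
    grep -qxF -- "$m" <<< "$mounts" || echo "$m"
  done
}
```

For example, df -h | missing_hana_mounts prints nothing when all expected mount points are present.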

Install SAP HANA

To install SAP HANA on the primary and secondary sites of your scale-up HA system, refer to the SAP documentation specific to the version of SAP HANA that you want to use.

Verify the installation of SAP HANA

To verify the installation of SAP HANA on the compute instances you've deployed to run it, complete the following steps:

Establish an SSH connection with a compute instance that you've deployed to run your SAP HANA system.

Switch to the SID_LCadm user:

su - SID_LCadm

Verify that the SAP HANA services, such as hdbnameserver, hdbindexserver, and others, are running on the compute instance:

HDB info

If you are using RHEL for SAP 9.0 or later, then make sure that the packages chkconfig and compat-openssl11 are installed on the compute instances that run your SAP HANA workload.

For more information from SAP, see SAP Note 3108316 - Red Hat Enterprise Linux 9.x: Installation and Configuration.
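The service check can also be scripted. This is a minimal sketch, assuming that hdbnameserver and hdbindexserver (the two services this guide names) are the processes you require; add any other services that your system relies on. The function name missing_hana_processes is hypothetical, and it only searches the process list that HDB info prints.

```shell
#!/usr/bin/env bash
# Sketch: confirm that core SAP HANA processes appear in "HDB info" output.
# Assumption: hdbnameserver and hdbindexserver are the processes you require.
REQUIRED_HANA_PROCESSES=(hdbnameserver hdbindexserver)

# Reads process-list output on stdin, prints each required process that is
# missing, and returns 0 only if all of them are present.
missing_hana_processes() {
  local ps_output proc status=0
  ps_output=$(cat)
  for proc in "${REQUIRED_HANA_PROCESSES[@]}"; do
    # -w matches whole words only, so a longer process name doesn't count.
    if ! grep -qw -- "$proc" <<< "$ps_output"; then
      echo "not running: $proc"
      status=1
    fi
  done
  return "$status"
}
```

For example, as SID_LCadm, run HDB info | missing_hana_processes.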

Validate your installation of Google Cloud's Agent for SAP

After you've deployed the Google Cloud resources and installed your SAP HANA system, you must validate that Google Cloud's Agent for SAP is functioning as expected.

Verify that Google Cloud's Agent for SAP is running

To verify that the agent is running, follow these steps:

Establish an SSH connection with your Compute Engine instance.

Run the following command:

systemctl status google-cloud-sap-agent

If the agent is functioning properly, then the output contains active (running). For example:

google-cloud-sap-agent.service - Google Cloud Agent for SAP
   Loaded: loaded (/usr/lib/systemd/system/google-cloud-sap-agent.service; enabled; vendor preset: disabled)
   Active: active (running) since Fri 2022-12-02 07:21:42 UTC; 4 days ago
 Main PID: 1337673 (google-cloud-sa)
    Tasks: 9 (limit: 100427)
   Memory: 22.4 M (max: 1.0G limit: 1.0G)
   CGroup: /system.slice/google-cloud-sap-agent.service
           └─1337673 /usr/bin/google-cloud-sap-agent

If the agent isn't running, then restart the agent.
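The status check and the restart can be combined into one step. The following sketch assumes root privileges and systemd, matching the commands above; the function names are hypothetical. The parsing helper looks for the same active (running) text that the preceding step tells you to look for.

```shell
#!/usr/bin/env bash
# Sketch: check Google Cloud's Agent for SAP and restart it if needed.
SAP_AGENT_SERVICE=google-cloud-sap-agent

# Returns 0 if "systemctl status" output on stdin reports active (running).
agent_output_is_running() {
  grep -q 'Active: active (running)'
}

# Checks the agent and restarts it when it is not running.
# Run as root; this function is defined here but not called.
ensure_sap_agent_running() {
  if systemctl status "$SAP_AGENT_SERVICE" | agent_output_is_running; then
    echo "$SAP_AGENT_SERVICE is running"
  else
    echo "$SAP_AGENT_SERVICE is not running; restarting it"
    systemctl restart "$SAP_AGENT_SERVICE"
  fi
}
```

After calling ensure_sap_agent_running, rerun systemctl status google-cloud-sap-agent to confirm the result. For scripts that only need a yes or no answer, systemctl is-active --quiet google-cloud-sap-agent is an alternative to parsing the status text.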

Verify that SAP Host Agent is receiving metrics

To verify that the infrastructure metrics are collected by Google Cloud's Agent for SAP and sent correctly to the SAP Host Agent, follow these steps:

- In your SAP system, enter transaction ST06.

- In the overview pane, check the availability and content of the following fields for the correct end-to-end setup of the SAP and Google monitoring infrastructure:

  - Cloud Provider: Google Cloud Platform
  - Enhanced Monitoring Access: TRUE
  - Enhanced Monitoring Details: ACTIVE

Disable SAP HANA autostart

For each SAP HANA instance in the cluster, make sure that SAP HANA autostart is disabled. For failovers, Pacemaker manages the starting and stopping of the SAP HANA instances in a cluster.

On each host as SID_LCadm, stop SAP HANA:

>HDB stop

On each host, open the SAP HANA profile by using an editor, such as vi:

vi /usr/sap/SID/SYS/profile/SID_HDBINST_NUM_HOST_NAME

Set the Autostart property to 0:

Autostart=0

Save the profile.

On each host as SID_LCadm, start SAP HANA:

>HDB start
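If you prefer not to edit the profile interactively, the Autostart change can be scripted. This is a minimal sketch to run in place of the vi edit, while SAP HANA is stopped. It assumes GNU sed (as shipped with RHEL) and a key = value line for Autostart in the profile; the function name disable_hana_autostart is hypothetical. Keep a copy of the profile before you change it.

```shell
#!/usr/bin/env bash
# Sketch: set Autostart=0 in an SAP HANA instance profile.
# Replaces an existing Autostart line (with or without spaces around "=")
# or appends one if the profile has none. Requires GNU sed for -i and -E.
disable_hana_autostart() {
  local profile=$1
  if [ ! -f "$profile" ]; then
    echo "profile not found: $profile" >&2
    return 1
  fi
  if grep -qE '^[[:space:]]*Autostart[[:space:]]*=' "$profile"; then
    sed -i -E 's/^[[:space:]]*Autostart[[:space:]]*=.*/Autostart=0/' "$profile"
  else
    # Make sure the file ends with a newline before appending a new line.
    if [ -n "$(tail -c 1 "$profile")" ]; then
      echo >> "$profile"
    fi
    echo 'Autostart=0' >> "$profile"
  fi
}
```

For example, as root, run disable_hana_autostart /usr/sap/SID/SYS/profile/SID_HDBINST_NUM_HOST_NAME and then grep Autostart in the same file to confirm the result.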

Enable SAP HANA Fast Restart

Google Cloud strongly recommends enabling SAP HANA Fast Restart for each instance of SAP HANA, especially for larger instances. SAP HANA Fast Restart reduces restart time in the event that SAP HANA terminates, but the operating system remains running.

As configured by the automation scripts that Google Cloud provides, the operating system and kernel settings already support SAP HANA Fast Restart.

You need to define the tmpfs file system and configure SAP HANA.

To define the tmpfs file system and configure SAP HANA, you can follow the manual steps or use the automation script that Google Cloud provides to enable SAP HANA Fast Restart. For more information, see the Manual steps and Automated steps sections that follow.

For the complete authoritative instructions for SAP HANA Fast Restart, see the SAP HANA Fast Restart Option documentation.

Manual steps

Configure the tmpfs file system

After the host VMs and the base SAP HANA systems are successfully deployed, you need to create and mount directories for the NUMA nodes in the tmpfs file system.

Display the NUMA topology of your VM

Before you can map the required tmpfs file system, you need to know how many NUMA nodes your VM has. To display the available NUMA nodes on a Compute Engine VM, enter the following command:

lscpu | grep NUMA

For example, an m2-ultramem-208 VM type has four NUMA nodes, numbered 0-3, as shown in the following example:

NUMA node(s):        4
NUMA node0 CPU(s):   0-25,104-129
NUMA node1 CPU(s):   26-51,130-155
NUMA node2 CPU(s):   52-77,156-181
NUMA node3 CPU(s):   78-103,182-207
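Because the number of tmpfs directories must match the NUMA node count, it can help to read the count programmatically rather than by eye. A minimal sketch, assuming the NUMA node(s) line format that lscpu prints in the example above; numa_node_count and numa_node_indices are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Sketch: extract the NUMA node count from lscpu output read on stdin.
# Assumes the "NUMA node(s):   N" line shown in the example output.
numa_node_count() {
  awk -F: '/^NUMA node\(s\)/ { gsub(/[[:space:]]/, "", $2); print $2; exit }'
}

# Prints the node indices 0..N-1, one per line, for example to drive a
# loop over /hana/tmpfs0 through /hana/tmpfsN-1.
numa_node_indices() {
  local count=$1
  if (( count > 0 )); then
    seq 0 $((count - 1))
  fi
}
```

For example, nodes=$(LC_ALL=C lscpu | numa_node_count) stores 4 on an m2-ultramem-208, and numa_node_indices "$nodes" prints 0 through 3. Setting LC_ALL=C keeps the lscpu labels in English on systems with a different locale.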

Create the NUMA node directories

Create a directory for each NUMA node in your VM and set the permissions.

For example, for four NUMA nodes that are numbered 0-3:

mkdir -pv /hana/tmpfs{0..3}/SID

chown -R SID_LCadm:sapsys /hana/tmpfs*/SID

chmod 777 -R /hana/tmpfs*/SID

Mount the NUMA node directories to tmpfs

Mount the tmpfs file system directories and specify a NUMA node preference for each with mpol=prefer. Replace SID with the SID in uppercase letters:

mount tmpfsSID0 -t tmpfs -o mpol=prefer:0 /hana/tmpfs0/SID
mount tmpfsSID1 -t tmpfs -o mpol=prefer:1 /hana/tmpfs1/SID
mount tmpfsSID2 -t tmpfs -o mpol=prefer:2 /hana/tmpfs2/SID
mount tmpfsSID3 -t tmpfs -o mpol=prefer:3 /hana/tmpfs3/SID

Update /etc/fstab

To ensure that the mount points are available after an operating system reboot, add entries into the file system table, /etc/fstab:

tmpfsSID0 /hana/tmpfs0/SID tmpfs rw,nofail,relatime,mpol=prefer:0
tmpfsSID1 /hana/tmpfs1/SID tmpfs rw,nofail,relatime,mpol=prefer:1
tmpfsSID2 /hana/tmpfs2/SID tmpfs rw,nofail,relatime,mpol=prefer:2
tmpfsSID3 /hana/tmpfs3/SID tmpfs rw,nofail,relatime,mpol=prefer:3
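On machine types with more NUMA nodes, such as the eight-node m2-ultramem-416, writing the mount commands and fstab entries by hand invites typos. The following sketch generates both from an SID and a node count, using the naming pattern shown above (tmpfsSIDn mounted on /hana/tmpfsn/SID with mpol=prefer:n). The function names are hypothetical, and the output is printed for review rather than applied.

```shell
#!/usr/bin/env bash
# Sketch: generate SAP HANA Fast Restart tmpfs mount commands and
# /etc/fstab entries, one per NUMA node, following this guide's naming.
# Arguments: SID in uppercase letters, number of NUMA nodes.
hana_tmpfs_mount_commands() {
  local sid=$1 nodes=$2 n
  for ((n = 0; n < nodes; n++)); do
    echo "mount tmpfs${sid}${n} -t tmpfs -o mpol=prefer:${n} /hana/tmpfs${n}/${sid}"
  done
}

hana_tmpfs_fstab_entries() {
  local sid=$1 nodes=$2 n
  for ((n = 0; n < nodes; n++)); do
    echo "tmpfs${sid}${n} /hana/tmpfs${n}/${sid} tmpfs rw,nofail,relatime,mpol=prefer:${n}"
  done
}
```

For example, hana_tmpfs_fstab_entries AHA 8 prints eight entries, tmpfsAHA0 through tmpfsAHA7. Review the output, create the directories first as described earlier, and only then append the entries to /etc/fstab.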

Optional: set limits on memory usage

The tmpfs file system can grow and shrink dynamically.

To limit the memory used by the tmpfs file system, you can set a size limit for a NUMA node volume with the size option. For example:

mount tmpfsSID0 -t tmpfs -o mpol=prefer:0,size=250G /hana/tmpfs0/SID

You can also limit overall tmpfs memory usage for all NUMA nodes for a given SAP HANA instance and a given server node by setting the persistent_memory_global_allocation_limit parameter in the [memorymanager] section of the global.ini file.

SAP HANA configuration for Fast Restart

To configure SAP HANA for Fast Restart, update the global.ini file and specify the tables to store in persistent memory.

Update the [persistence] section in the global.ini file

Configure the [persistence] section in the SAP HANA global.ini file to reference the tmpfs locations. Separate each tmpfs location with a semicolon:

[persistence]
basepath_datavolumes = /hana/data
basepath_logvolumes = /hana/log
basepath_persistent_memory_volumes = /hana/tmpfs0/SID;/hana/tmpfs1/SID;/hana/tmpfs2/SID;/hana/tmpfs3/SID

The preceding example specifies four memory volumes for four NUMA nodes, which corresponds to the m2-ultramem-208. If you were running on the m2-ultramem-416, you would need to configure eight memory volumes (0..7).
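The semicolon-separated value of basepath_persistent_memory_volumes grows with the NUMA node count. A minimal sketch that builds it, assuming the /hana/tmpfsN/SID layout used in this guide; persistent_memory_basepath is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: build the basepath_persistent_memory_volumes value for global.ini.
# Arguments: SID in uppercase letters, number of NUMA nodes.
persistent_memory_basepath() {
  local sid=$1 nodes=$2 n
  local -a paths=()
  for ((n = 0; n < nodes; n++)); do
    paths+=("/hana/tmpfs${n}/${sid}")
  done
  # "${paths[*]}" joins the array elements with the first character of IFS.
  local IFS=';'
  echo "${paths[*]}"
}
```

For example, echo "basepath_persistent_memory_volumes = $(persistent_memory_basepath AHA 8)" prints the line for an m2-ultramem-416, with eight memory volumes from /hana/tmpfs0/AHA through /hana/tmpfs7/AHA.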

Restart SAP HANA after modifying the global.ini file.

SAP HANA can now use the tmpfs location as persistent memory space.

Specify the tables to store in persistent memory

Specify the column tables or partitions to store in persistent memory.

For example, to turn on persistent memory for an existing table, run the following SQL statement:

ALTER TABLE exampletable persistent memory ON immediate CASCADE

To change the default for new tables, add the table_default parameter to the indexserver.ini file. For example:

[persistent_memory]
table_default = ON

For more information about how to control persistent memory for columns and tables, and about the monitoring views that provide detailed information, see SAP HANA Persistent Memory.

Automated steps

The automation script that Google Cloud provides to enable SAP HANA Fast Restart makes changes to the /hana/tmpfs* directories, the /etc/fstab file, and the SAP HANA configuration. When you run the script, you might need to perform additional steps depending on whether this is the initial deployment of your SAP HANA system or you are resizing your machine to a different NUMA size.

For the initial deployment of your SAP HANA system or resizing the machine to increase the number of NUMA nodes, make sure that SAP HANA is running during the execution of the automation script that Google Cloud provides to enable SAP HANA Fast Restart.

When you resize your machine to decrease the number of NUMA nodes, make sure that SAP HANA is stopped during the execution of the automation script that Google Cloud provides to enable SAP HANA Fast Restart. After the script is executed, you need to manually update the SAP HANA configuration to complete the SAP HANA Fast Restart setup. For more information, see SAP HANA configuration for Fast Restart.
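The rule above, running or stopped depending on how the NUMA node count changes, can be expressed as a small check to run before you start the script. This is a sketch: it compares the VM's NUMA node count with the number of existing /hana/tmpfs* directories (zero on an initial deployment). The guide doesn't describe the case where the two counts are equal, so the sketch reports that case separately rather than guessing. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: report whether SAP HANA should be running or stopped while
# sap_lib_hdbfr.sh runs.
# Arguments: NUMA node count, number of existing /hana/tmpfs* directories.
hdbfr_required_hana_state() {
  local numa_nodes=$1 existing_dirs=$2
  if (( existing_dirs == 0 || numa_nodes > existing_dirs )); then
    echo running    # initial deployment, or resize to more NUMA nodes
  elif (( numa_nodes < existing_dirs )); then
    echo stopped    # resize to fewer NUMA nodes; update global.ini afterwards
  else
    echo unchanged  # same count: not covered by this guide
  fi
}

# Counts existing /hana/tmpfs* entries (prints 0 if there are none).
count_tmpfs_dirs() {
  local dirs=(/hana/tmpfs*)
  if [ -e "${dirs[0]}" ]; then
    echo "${#dirs[@]}"
  else
    echo 0
  fi
}
```

For example, hdbfr_required_hana_state 4 "$(count_tmpfs_dirs)" prints running on a new four-node deployment where no /hana/tmpfs* directories exist yet.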

To enable SAP HANA Fast Restart, follow these steps:

Establish an SSH connection with your host VM.

Switch to root:

sudo su -

Download the sap_lib_hdbfr.sh script:

wget https://storage.googleapis.com/cloudsapdeploy/terraform/latest/terraform/lib/sap_lib_hdbfr.sh

Make the file executable:

chmod +x sap_lib_hdbfr.sh

Verify that the script has no errors:

vi sap_lib_hdbfr.sh
./sap_lib_hdbfr.sh -help

If the command returns an error, contact Cloud Customer Care. For more information about contacting Customer Care, see Getting support for SAP on Google Cloud.

Run the script, replacing the SAP HANA system ID (SID) and the password for the SYSTEM user of the SAP HANA database. To securely provide the password, we recommend that you use a secret in Secret Manager.

Run the script by using the name of a secret in Secret Manager. This secret must exist in the Google Cloud project that contains your host VM instance.

sudo ./sap_lib_hdbfr.sh -h 'SID' -s SECRET_NAME

Replace the following:

- SID: specify the SID with uppercase letters. For example, AHA.
- SECRET_NAME: specify the name of the secret that corresponds to the password for the SYSTEM user of the SAP HANA database. This secret must exist in the Google Cloud project that contains your host VM instance.

Alternatively, you can run the script using a plain text password. After SAP HANA Fast Restart is enabled, make sure to change your password. Using a plain text password is not recommended because the password is recorded in the command-line history of your VM.

sudo ./sap_lib_hdbfr.sh -h 'SID' -p 'PASSWORD'

Replace the following:

- SID: specify the SID with uppercase letters. For example, AHA.
- PASSWORD: specify the password for the SYSTEM user of the SAP HANA database.

For a successful initial run, you should see an output similar to the following:

INFO - Script is running in standalone mode
ls: cannot access '/hana/tmpfs*': No such file or directory
INFO - Setting up HANA Fast Restart for system 'TST/00'.
INFO - Number of NUMA nodes is 2
INFO - Number of directories /hana/tmpfs* is 0
INFO - HANA version 2.57
INFO - No directories /hana/tmpfs* exist. Assuming initial setup.
INFO - Creating 2 directories /hana/tmpfs* and mounting them
INFO - Adding /hana/tmpfs* entries to /etc/fstab. Copy is in /etc/fstab.20220625_030839
INFO - Updating the HANA configuration.
INFO - Running command: select * from dummy
DUMMY
"X"
1 row selected (overall time 4124 usec; server time 130 usec)
INFO - Running command: ALTER SYSTEM ALTER CONFIGURATION ('global.ini', 'SYSTEM') SET ('persistence', 'basepath_persistent_memory_volumes') = '/hana/tmpfs0/TST;/hana/tmpfs1/TST;'
0 rows affected (overall time 3570 usec; server time 2239 usec)
INFO - Running command: ALTER SYSTEM ALTER CONFIGURATION ('global.ini', 'SYSTEM') SET ('persistent_memory', 'table_unload_action') = 'retain';
0 rows affected (overall time 4308 usec; server time 2441 usec)
INFO - Running command: ALTER SYSTEM ALTER CONFIGURATION ('indexserver.ini', 'SYSTEM') SET ('persistent_memory', 'table_default') = 'ON';
0 rows affected (overall time 3422 usec; server time 2152 usec)

Optional: Configure SSH keys on the primary and secondary compute instances

The SAP HANA secure store (SSFS) keys need to be synchronized between the hosts in the HA cluster. To simplify the synchronization, and to let files such as backups be copied between the compute instances in the HA cluster, these instructions have you create root SSH connections between the two compute instances.

Your organization is likely to have guidelines that govern internal network communications. If necessary, after the deployment is complete, you can remove the metadata from the compute instances and the keys from the ~/.ssh/authorized_keys file.

If setting up direct SSH connections doesn't comply with your organization's guidelines, then you can synchronize the SSFS keys and transfer files by using other methods such as the following:

- Transfer smaller files through your local workstation by using the Cloud Shell Upload file and Download file menu options. For more information, see Managing files with Cloud Shell.

- Exchange files by using a Cloud Storage bucket. For more information, see the Cloud Storage documentation.

- Use the Backint feature of Google Cloud's Agent for SAP to back up and restore the SAP HANA databases. For more information, see Backint feature of Google Cloud's Agent for SAP.

- Use a file storage solution such as Filestore or Google Cloud NetApp Volumes to create a shared folder. For more information, see File server options.

To enable SSH connections between the primary and secondary instances of your SAP HANA system, complete the following steps:

Establish an SSH connection with the compute instance that hosts the primary instance of your SAP HANA system.

As the root user, generate an SSH key:

# sudo ssh-keygen

Update the metadata of the secondary compute instance with the public SSH key of the primary compute instance:

# gcloud compute instances add-metadata secondary-host-name \
    --metadata "ssh-keys=$(whoami):$(cat ~/.ssh/id_rsa.pub)" --zone secondary-zone

Authorize the primary compute instance's own key for SSH connections to itself:

# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

Establish an SSH connection with the compute instance that hosts the secondary instance of your SAP HANA system.

As the root user, generate an SSH key:

# sudo ssh-keygen

Update the metadata of the primary compute instance with the public SSH key of the secondary compute instance:

# gcloud compute instances add-metadata primary-host-name \
    --metadata "ssh-keys=$(whoami):$(cat ~/.ssh/id_rsa.pub)" --zone primary-zone

Authorize the secondary compute instance's own key for SSH connections to itself:

# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

Confirm that the SSH keys are set up properly by opening an SSH connection from the secondary compute instance to the primary compute instance:

# ssh primary-host-name

On the primary compute instance, as the root user, confirm the connection by opening an SSH connection to the secondary compute instance:

# ssh secondary-host-name

Back up the databases

Create backups of your databases to initiate database logging for SAP HANA system replication and create a recovery point.

If you have multiple tenant databases in an MDC configuration, then back up each tenant database.

The manual_sap_hana_scaleup_ha.tf Terraform configuration provided by Google Cloud uses /hanabackup/data/SID as the default backup directory.

To create backups of new SAP HANA databases, complete the following steps:

On the primary host, switch to the SID_LCadm user. Depending on your OS image, the command might be different.

sudo -i -u SID_LCadm

Create database backups:

For an SAP HANA single-database-container system:

>hdbsql -t -u system -p SYSTEM_PASSWORD -i INST_NUM \
    "backup data using file ('full')"

The following example shows a successful response from a new SAP HANA system:

0 rows affected (overall time 18.416058 sec; server time 18.414209 sec)

For an SAP HANA multi-database-container system (MDC), create a backup of the system database as well as any tenant databases:

>hdbsql -t -d SYSTEMDB -u system -p SYSTEM_PASSWORD -i INST_NUM \
    "backup data using file ('full')"
>hdbsql -t -d SID -u system -p SYSTEM_PASSWORD -i INST_NUM \
    "backup data using file ('full')"

The following example shows a successful response from a new SAP HANA system:

0 rows affected (overall time 16.590498 sec; server time 16.588806 sec)

Confirm that the logging mode is set to normal:

>hdbsql -u system -p SYSTEM_PASSWORD -i INST_NUM \
    "select value from \"SYS\".\"M_INIFILE_CONTENTS\" where key='log_mode'"

The output is similar to the following:

VALUE
"normal"
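With several tenant databases, the per-database backup commands can be looped. The following sketch runs the same hdbsql backup statement for SYSTEMDB and for each tenant name that you pass, as SID_LCadm on the primary host. It assumes an MDC system; the function name and the DRY_RUN switch are hypothetical additions for review. As in the commands above, the password appears on the command line; an hdbuserstore key used with hdbsql -U avoids that, but isn't shown here.

```shell
#!/usr/bin/env bash
# Sketch: full file-based backups of SYSTEMDB and the given tenant databases.
# Arguments: instance number, SYSTEM password, tenant database names...
# Set DRY_RUN=1 to print the hdbsql commands (password masked) instead of
# running them.
backup_hana_databases() {
  local inst_num=$1 password=$2 db
  shift 2
  for db in SYSTEMDB "$@"; do
    local -a args
    args=(-t -d "$db" -u system -i "$inst_num" "backup data using file ('full')")
    if [[ ${DRY_RUN:-0} == 1 ]]; then
      printf '%q ' hdbsql -p '***' "${args[@]}"   # shell-quoted for review
      echo
    else
      echo "Backing up $db"
      hdbsql -p "$password" "${args[@]}" || return 1
    fi
  done
}
```

For example, DRY_RUN=1 backup_hana_databases INST_NUM SYSTEM_PASSWORD SID prints the two commands for a system with one tenant database named after the SID, as in the preceding MDC example.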

Enable SAP HANA system replication

As a part of enabling SAP HANA system replication, you need to copy the data and key files for the SAP HANA secure stores on the file system (SSFS) from the primary host to the secondary host. The method that this procedure uses to copy the files is just one possible method that you can use.

On the primary host as SID_LCadm, enable system replication:

>hdbnsutil -sr_enable --name=primary-host-name

On the secondary host as SID_LCadm, stop SAP HANA:

>HDB stop

On the primary host, using the same user account that you used to set up SSH between the host VMs, copy the key files to the secondary host. For convenience, the following commands also define an environment variable for your user account ID:

$ sudo cp /usr/sap/SID/SYS/global/security/rsecssfs ~/rsecssfs -r
$ myid=$(whoami)
$ sudo chown ${myid} -R /home/"${myid}"/rsecssfs
$ scp -r rsecssfs $(whoami)@secondary-host-name:rsecssfs
$ rm -r /home/"${myid}"/rsecssfs

On the secondary host, as the same user as in the preceding step:

Replace the existing key files in the rsecssfs directories with the files from the primary host and set the file permissions to limit access:

$ SAPSID=SID
$ sudo rm /usr/sap/"${SAPSID}"/SYS/global/security/rsecssfs/data/SSFS_"${SAPSID}".DAT
$ sudo rm /usr/sap/"${SAPSID}"/SYS/global/security/rsecssfs/key/SSFS_"${SAPSID}".KEY
$ myid=$(whoami)
$ sudo cp /home/"${myid}"/rsecssfs/data/SSFS_"${SAPSID}".DAT \
    /usr/sap/"${SAPSID}"/SYS/global/security/rsecssfs/data/SSFS_"${SAPSID}".DAT
$ sudo cp /home/"${myid}"/rsecssfs/key/SSFS_"${SAPSID}".KEY \
    /usr/sap/"${SAPSID}"/SYS/global/security/rsecssfs/key/SSFS_"${SAPSID}".KEY
$ sudo chown "${SAPSID,,}"adm:sapsys \
    /usr/sap/"${SAPSID}"/SYS/global/security/rsecssfs/data/SSFS_"${SAPSID}".DAT
$ sudo chown "${SAPSID,,}"adm:sapsys \
    /usr/sap/"${SAPSID}"/SYS/global/security/rsecssfs/key/SSFS_"${SAPSID}".KEY
$ sudo chmod 644 \
    /usr/sap/"${SAPSID}"/SYS/global/security/rsecssfs/data/SSFS_"${SAPSID}".DAT
$ sudo chmod 640 \
    /usr/sap/"${SAPSID}"/SYS/global/security/rsecssfs/key/SSFS_"${SAPSID}".KEY

Clean up the files in your home directory:

$ rm -r /home/"${myid}"/rsecssfs

As SID_LCadm, register the secondary SAP HANA system with SAP HANA system replication:

>hdbnsutil -sr_register --remoteHost=primary-host-name --remoteInstance=inst_num \
    --replicationMode=syncmem --operationMode=logreplay --name=secondary-host-name

As SID_LCadm, start SAP HANA:

>HDB start

Validating system replication

On the primary host as SID_LCadm, confirm that SAP HANA system replication is active by running the following Python script:

$ python $DIR_INSTANCE/exe/python_support/systemReplicationStatus.py

If replication is set up properly, among other indicators, the following values are displayed for the xsengine, nameserver, and indexserver services:

- The Secondary Active Status is YES
- The Replication Status is ACTIVE

Also, the overall system replication status shows ACTIVE.
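For scripted checks, the exit code of systemReplicationStatus.py is easier to evaluate than its table output. The sketch below assumes the return-code convention that SAP HANA cluster resource agents commonly rely on (15 for ACTIVE, 14 for SYNCING, and so on); confirm the values against the SAP documentation for your SAP HANA revision before depending on them. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: interpret the exit code of systemReplicationStatus.py.
# Assumption: 10=NONE, 11=ERROR, 12=UNKNOWN, 13=INITIALIZING, 14=SYNCING,
# 15=ACTIVE. Verify this mapping for your SAP HANA revision.
sr_status_from_rc() {
  case $1 in
    10) echo NONE ;;
    11) echo ERROR ;;
    12) echo UNKNOWN ;;
    13) echo INITIALIZING ;;
    14) echo SYNCING ;;
    15) echo ACTIVE ;;
    *)  echo "UNEXPECTED($1)" ;;
  esac
}

# Runs the script as SID_LCadm on the primary host and returns 0 only when
# replication is ACTIVE. Defined here but not called.
check_sr_status() {
  python "$DIR_INSTANCE/exe/python_support/systemReplicationStatus.py" > /dev/null
  local rc=$? status
  status=$(sr_status_from_rc "$rc")
  echo "system replication status: $status"
  [ "$status" = ACTIVE ]
}
```

Even when check_sr_status reports ACTIVE, review the full script output once to confirm the per-service values listed above.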

Configure the Cloud Load Balancing failover support

The internal passthrough Network Load Balancer service with failover support routes traffic to the active host in an SAP HANA cluster based on a health check service.

Reserve an IP address for the virtual IP

The virtual IP (VIP) address, which is sometimes referred to as a floating IP address, follows the active SAP HANA system. The load balancer routes traffic that is sent to the VIP to the VM that is currently hosting the active SAP HANA system.

Open Cloud Shell:

Reserve an IP address for the virtual IP. This is the IP address that applications use to access SAP HANA. If you omit the --addresses flag, an IP address in the specified subnet is chosen for you:

$ gcloud compute addresses create VIP_NAME \
    --region CLUSTER_REGION --subnet CLUSTER_SUBNET \
    --addresses VIP_ADDRESS

For more information about reserving a static IP, see Reserving a static internal IP address.

Confirm IP address reservation:

$ gcloud compute addresses describe VIP_NAME \
    --region CLUSTER_REGION

You should see output similar to the following example:

address: 10.0.0.19
addressType: INTERNAL
creationTimestamp: '2020-05-20T14:19:03.109-07:00'
description: ''
id: '8961491304398200872'
kind: compute#address
name: vip-for-hana-ha
networkTier: PREMIUM
purpose: GCE_ENDPOINT
region: https://www.googleapis.com/compute/v1/projects/example-project-123456/regions/us-central1
selfLink: https://www.googleapis.com/compute/v1/projects/example-project-123456/regions/us-central1/addresses/vip-for-hana-ha
status: RESERVED
subnetwork: https://www.googleapis.com/compute/v1/projects/example-project-123456/regions/us-central1/subnetworks/example-subnet-us-central1

Create instance groups for your host VMs

In Cloud Shell, create two unmanaged instance groups and assign the primary master host VM to one and the secondary master host VM to the other:

$ gcloud compute instance-groups unmanaged create PRIMARY_IG_NAME \
    --zone=PRIMARY_ZONE
$ gcloud compute instance-groups unmanaged add-instances PRIMARY_IG_NAME \
    --zone=PRIMARY_ZONE \
    --instances=PRIMARY_HOST_NAME
$ gcloud compute instance-groups unmanaged create SECONDARY_IG_NAME \
    --zone=SECONDARY_ZONE
$ gcloud compute instance-groups unmanaged add-instances SECONDARY_IG_NAME \
    --zone=SECONDARY_ZONE \
    --instances=SECONDARY_HOST_NAME

Confirm the creation of the instance groups:

$ gcloud compute instance-groups unmanaged list

You should see output similar to the following example:

NAME          ZONE           NETWORK          NETWORK_PROJECT         MANAGED  INSTANCES
hana-ha-ig-1  us-central1-a  example-network  example-project-123456  No       1
hana-ha-ig-2  us-central1-c  example-network  example-project-123456  No       1

Create a Compute Engine health check

In Cloud Shell, create the health check. For the port used by the health check, choose a port that is in the private range, 49152-65535, to avoid clashing with other services. The check-interval and timeout values are slightly longer than the defaults so as to increase failover tolerance during Compute Engine live migration events. You can adjust the values, if necessary:

$ gcloud compute health-checks create tcp HEALTH_CHECK_NAME --port=HLTH_CHK_PORT_NUM \
    --proxy-header=NONE --check-interval=10 --timeout=10 --unhealthy-threshold=2 \
    --healthy-threshold=2

Confirm the creation of the health check:

$ gcloud compute health-checks describe HEALTH_CHECK_NAME

You should see output similar to the following example:

checkIntervalSec: 10
creationTimestamp: '2020-05-20T21:03:06.924-07:00'
healthyThreshold: 2
id: '4963070308818371477'
kind: compute#healthCheck
name: hana-health-check
selfLink: https://www.googleapis.com/compute/v1/projects/example-project-123456/global/healthChecks/hana-health-check
tcpHealthCheck:
  port: 60000
  portSpecification: USE_FIXED_PORT
  proxyHeader: NONE
timeoutSec: 10
type: TCP
unhealthyThreshold: 2

Create a firewall rule for the health checks

Define a firewall rule for a port in the private range that allows access to your host VMs from the IP ranges that are used by Compute Engine health checks, 35.191.0.0/16 and 130.211.0.0/22. For more information, see Creating firewall rules for health checks.

If you don't already have one, add a network tag to your host VMs. This network tag is used by the firewall rule for health checks.

$ gcloud compute instances add-tags PRIMARY_HOST_NAME \
    --tags NETWORK_TAGS \
    --zone PRIMARY_ZONE
$ gcloud compute instances add-tags SECONDARY_HOST_NAME \
    --tags NETWORK_TAGS \
    --zone SECONDARY_ZONE

If you don't already have one, create a firewall rule to allow the health checks:

$ gcloud compute firewall-rules create RULE_NAME \
    --network NETWORK_NAME \
    --action ALLOW \
    --direction INGRESS \
    --source-ranges 35.191.0.0/16,130.211.0.0/22 \
    --target-tags NETWORK_TAGS \
    --rules tcp:HLTH_CHK_PORT_NUM

For example:

gcloud compute firewall-rules create fw-allow-health-checks \
    --network example-network \
    --action ALLOW \
    --direction INGRESS \
    --source-ranges 35.191.0.0/16,130.211.0.0/22 \
    --target-tags cluster-ntwk-tag \
    --rules tcp:60000

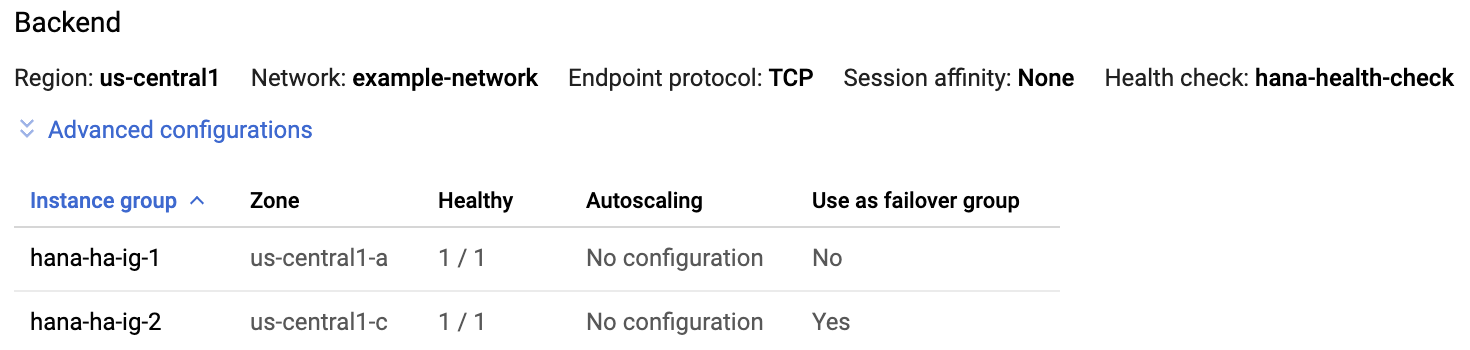

Configure the load balancer and failover group

Create the load balancer backend service:

$ gcloud compute backend-services create BACKEND_SERVICE_NAME \
    --load-balancing-scheme internal \
    --health-checks HEALTH_CHECK_NAME \
    --no-connection-drain-on-failover \
    --drop-traffic-if-unhealthy \
    --failover-ratio 1.0 \
    --region CLUSTER_REGION \
    --global-health-checks

Add the primary instance group to the backend service:

$ gcloud compute backend-services add-backend BACKEND_SERVICE_NAME \
    --instance-group PRIMARY_IG_NAME \
    --instance-group-zone PRIMARY_ZONE \
    --region CLUSTER_REGION

Add the secondary, failover instance group to the backend service:

$ gcloud compute backend-services add-backend BACKEND_SERVICE_NAME \
    --instance-group SECONDARY_IG_NAME \
    --instance-group-zone SECONDARY_ZONE \
    --failover \
    --region CLUSTER_REGION

Create a forwarding rule. For the IP address, specify the IP address that you reserved for the VIP. If you need to access the SAP HANA system from outside of the region that is specified in the following command, include the --allow-global-access flag in the definition:

$ gcloud compute forwarding-rules create RULE_NAME \
    --load-balancing-scheme internal \
    --address VIP_ADDRESS \
    --subnet CLUSTER_SUBNET \
    --region CLUSTER_REGION \
    --backend-service BACKEND_SERVICE_NAME \
    --ports ALL

For more information about cross-region access to your SAP HANA high-availability system, see Internal TCP/UDP Load Balancing.
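The four commands in this section can be kept together in one script so that the same names are used throughout. This is a sketch: the example values come from the sample outputs in this guide, except for the backend service and forwarding rule names, which are hypothetical. The run wrapper defaults to a dry run that only prints each gcloud command; set DRY_RUN=0 to execute them.

```shell
#!/usr/bin/env bash
# Sketch: create the internal passthrough Network Load Balancer pieces.
# Replace the example values below with your own.
BACKEND_SERVICE_NAME=hana-backend-service     # hypothetical
HEALTH_CHECK_NAME=hana-health-check
CLUSTER_REGION=us-central1
PRIMARY_IG_NAME=hana-ha-ig-1
PRIMARY_ZONE=us-central1-a
SECONDARY_IG_NAME=hana-ha-ig-2
SECONDARY_ZONE=us-central1-c
FORWARDING_RULE_NAME=hana-forwarding-rule     # hypothetical
VIP_ADDRESS=10.0.0.19
CLUSTER_SUBNET=example-subnet-us-central1

# Prints the command when DRY_RUN is 1 (the default), otherwise runs it.
run() {
  if [[ ${DRY_RUN:-1} == 1 ]]; then
    echo "$*"
  else
    "$@"
  fi
}

create_hana_ilb() {
  run gcloud compute backend-services create "$BACKEND_SERVICE_NAME" \
    --load-balancing-scheme internal --health-checks "$HEALTH_CHECK_NAME" \
    --no-connection-drain-on-failover --drop-traffic-if-unhealthy \
    --failover-ratio 1.0 --region "$CLUSTER_REGION" --global-health-checks || return 1
  run gcloud compute backend-services add-backend "$BACKEND_SERVICE_NAME" \
    --instance-group "$PRIMARY_IG_NAME" --instance-group-zone "$PRIMARY_ZONE" \
    --region "$CLUSTER_REGION" || return 1
  # The secondary instance group is the failover backend.
  run gcloud compute backend-services add-backend "$BACKEND_SERVICE_NAME" \
    --instance-group "$SECONDARY_IG_NAME" --instance-group-zone "$SECONDARY_ZONE" \
    --failover --region "$CLUSTER_REGION" || return 1
  run gcloud compute forwarding-rules create "$FORWARDING_RULE_NAME" \
    --load-balancing-scheme internal --address "$VIP_ADDRESS" \
    --subnet "$CLUSTER_SUBNET" --region "$CLUSTER_REGION" \
    --backend-service "$BACKEND_SERVICE_NAME" --ports ALL
}
```

Call create_hana_ilb once to review the printed commands, then run DRY_RUN=0 create_hana_ilb in Cloud Shell. Add --allow-global-access to the forwarding rule command if you need cross-region access.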

Test the load balancer configuration

Even though your backend instance groups won't register as healthy until later, you can test the load balancer configuration by setting up a listener to respond to the health checks. After setting up a listener, if the load balancer is configured correctly, the status of the backend instance groups changes to healthy.

The following sections present different methods that you can use to test the configuration.

Testing the load balancer with the socat utility

You can use the socat utility to temporarily listen on the health check port.

On both host VMs, install the socat utility:

$ sudo yum install -y socat

Start a socat process to listen for 60 seconds on the health check port:

$ sudo timeout 60s socat - TCP-LISTEN:HLTH_CHK_PORT_NUM,fork

In Cloud Shell, after waiting a few seconds for the health check to detect the listener, check the health of your backend instance groups:

$ gcloud compute backend-services get-health BACKEND_SERVICE_NAME \
    --region CLUSTER_REGION

You should see output similar to the following:

---
backend: https://www.googleapis.com/compute/v1/projects/example-project-123456/zones/us-central1-a/instanceGroups/hana-ha-ig-1
status:
  healthStatus:
  - healthState: HEALTHY
    instance: https://www.googleapis.com/compute/v1/projects/example-project-123456/zones/us-central1-a/instances/hana-ha-vm-1
    ipAddress: 10.0.0.35
    port: 80
  kind: compute#backendServiceGroupHealth
---
backend: https://www.googleapis.com/compute/v1/projects/example-project-123456/zones/us-central1-c/instanceGroups/hana-ha-ig-2
status:
  healthStatus:
  - healthState: HEALTHY
    instance: https://www.googleapis.com/compute/v1/projects/example-project-123456/zones/us-central1-c/instances/hana-ha-vm-2
    ipAddress: 10.0.0.34
    port: 80
  kind: compute#backendServiceGroupHealth
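Instead of rerunning get-health by hand while the listener is up, you can poll it. A minimal sketch: it counts the healthState: HEALTHY entries in YAML output like the example above and retries a fixed number of times. The function names, the retry count, and the interval are assumptions.

```shell
#!/usr/bin/env bash
# Sketch: wait until the expected number of load balancer backends are healthy.

# Counts "healthState: HEALTHY" entries in get-health output on stdin.
# UNHEALTHY entries don't match, because HEALTHY must directly follow the
# colon and any whitespace.
count_healthy_backends() {
  grep -cE 'healthState:[[:space:]]*HEALTHY'
}

# Arguments: expected healthy count, attempts, seconds between attempts,
# then the command that prints the get-health output.
wait_for_healthy_backends() {
  local expected=$1 attempts=$2 interval=$3 i healthy
  shift 3
  for ((i = 1; i <= attempts; i++)); do
    healthy=$("$@" | count_healthy_backends)
    echo "attempt $i: $healthy of $expected backends healthy"
    if (( healthy >= expected )); then
      return 0
    fi
    if (( i < attempts )); then
      sleep "$interval"
    fi
  done
  return 1
}
```

For example, wait_for_healthy_backends 2 5 10 gcloud compute backend-services get-health BACKEND_SERVICE_NAME --region CLUSTER_REGION polls for up to about 40 seconds, which fits inside the 60-second socat listener started above.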

Testing the load balancer using port 22

If port 22 is open for SSH connections on your host VMs, you can temporarily edit the health checker to use port 22, which has a listener that can respond to the health checker.

To temporarily use port 22, follow these steps:

Click your health check in the console:

Click Edit.

In the Port field, change the port number to 22.

Click Save and wait a minute or two.

In Cloud Shell, check the health of your backend instance groups:

$ gcloud compute backend-services get-health BACKEND_SERVICE_NAME \
    --region CLUSTER_REGION

You should see output similar to the following:

---
backend: https://www.googleapis.com/compute/v1/projects/example-project-123456/zones/us-central1-a/instanceGroups/hana-ha-ig-1
status:
  healthStatus:
  - healthState: HEALTHY
    instance: https://www.googleapis.com/compute/v1/projects/example-project-123456/zones/us-central1-a/instances/hana-ha-vm-1
    ipAddress: 10.0.0.35
    port: 80
  kind: compute#backendServiceGroupHealth
---
backend: https://www.googleapis.com/compute/v1/projects/example-project-123456/zones/us-central1-c/instanceGroups/hana-ha-ig-2
status:
  healthStatus:
  - healthState: HEALTHY
    instance: https://www.googleapis.com/compute/v1/projects/example-project-123456/zones/us-central1-c/instances/hana-ha-vm-2
    ipAddress: 10.0.0.34
    port: 80
  kind: compute#backendServiceGroupHealth

When you are done, change the health check port number back to the original port number.

Install HA packages

If you want to set up SBD-based fencing instead of using the fence_gce fence agent, then install the required packages on the primary and secondary hosts:

sudo yum install iscsi-initiator-utils targetcli fence-agents-sbd

Set up Pacemaker

The following procedure configures the RHEL implementation of a Pacemaker cluster on Compute Engine instances for SAP HANA.

The procedure is based on the following Red Hat documentation for configuring high-availability clusters (a Red Hat subscription is required):

- Installing and Configuring a Red Hat Enterprise Linux 7.6 (and later) High-Availability Cluster on Google Compute Cloud

- Automated SAP HANA System Replication in Scale-Up in pacemaker cluster

Install the cluster agents on both nodes

Complete the following steps on both nodes.

As root, install the Pacemaker components:

# yum -y install pcs pacemaker fence-agents-gce resource-agents-gcp resource-agents-sap-hana
# yum update -y

If you are using a Google-provided RHEL-for-SAP image, these packages are already installed, but some updates might be required.

Set the password for the hacluster user, which is installed as part of the packages:

# passwd hacluster

Specify a password for hacluster at the prompts.

In the RHEL images provided by Google Cloud, the OS firewall service is active by default. Configure the firewall service to allow high-availability traffic:

# firewall-cmd --permanent --add-service=high-availability
# firewall-cmd --reload

Start the pcs service and configure it to start at boot time:

# systemctl start pcsd.service
# systemctl enable pcsd.service

Check the status of the pcs service:

# systemctl status pcsd.service

The output is similar to the following:

● pcsd.service - PCS GUI and remote configuration interface
   Loaded: loaded (/usr/lib/systemd/system/pcsd.service; enabled; vendor preset: disabled)
   Active: active (running) since Sat 2020-06-13 21:17:05 UTC; 25s ago
     Docs: man:pcsd(8)
           man:pcs(8)
 Main PID: 31627 (pcsd)
   CGroup: /system.slice/pcsd.service
           └─31627 /usr/bin/ruby /usr/lib/pcsd/pcsd
Jun 13 21:17:03 example-ha-vm1 systemd[1]: Starting PCS GUI and remote configuration interface...
Jun 13 21:17:05 example-ha-vm1 systemd[1]: Started PCS GUI and remote configuration interface.

In the /etc/hosts file, add the full hostname and the internal IP addresses of both hosts in the cluster. For example:

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
10.0.0.40 example-ha-vm1.us-central1-a.c.example-project-123456.internal example-ha-vm1  # Added by Google
10.0.0.41 example-ha-vm2.us-central1-c.c.example-project-123456.internal example-ha-vm2
169.254.169.254 metadata.google.internal  # Added by Google

For more information from Red Hat about setting up the /etc/hosts file on RHEL cluster nodes, see https://access.redhat.com/solutions/81123.

Create the SBD LUN and iSCSI targets

If you're using SBD-based fencing, then you must create the SBD LUN and iSCSI targets. If you're using fence_gce based fencing, then you can skip this section.

To create the SBD LUN and iSCSI targets, complete the following steps:

On all nodes of your HA cluster, enable and start the

targetservice by running following commands:systemctl enable target systemctl start targetOn all the nodes of your HA cluster, create the SBD storage devices by running the following commands. This configures all the compute instances in the cluster to act as an iSCSI target server.

mkdir -p /iscsi_target/
targetcli backstores/fileio create SBD_NODE_NAME /iscsi_target/"HOSTNAME" 100M write_back=false
targetcli iscsi/ create iqn.2006-04.HOSTNAME-sbdtarget.local:HOSTNAME-sbdtarget
targetcli iscsi/iqn.2006-04.HOSTNAME-sbdtarget.local:HOSTNAME-sbdtarget/tpg1/luns/ create /backstores/fileio/"SBD_NODE_NAME"
targetcli iscsi/iqn.2006-04.HOSTNAME-sbdtarget.local:HOSTNAME-sbdtarget/tpg1/acls/ create iqn.2006-04.PRIMARY_INSTANCE_NAME-sbdinitiator.local:PRIMARY_INSTANCE_NAME-sbdinitiator
targetcli iscsi/iqn.2006-04.HOSTNAME-sbdtarget.local:HOSTNAME-sbdtarget/tpg1/acls/ create iqn.2006-04.SECONDARY_INSTANCE_NAME-sbdinitiator.local:SECONDARY_INSTANCE_NAME-sbdinitiator
targetcli saveconfig

Replace the following:

- SBD_NODE_NAME: the SBD identifier that you assign to the node in your HA cluster
- HOSTNAME: the name of the compute instance that hosts the node
- PRIMARY_INSTANCE_NAME: the name of the compute instance that hosts the primary node in your HA cluster
- SECONDARY_INSTANCE_NAME: the name of the compute instance that hosts the secondary node in your HA cluster

The output is similar on all compute instances in your HA cluster. The following is an example output from the primary node of the cluster:

[root@example-ha-vm1 ~]# targetcli ls
o- / ......................................................................................... [...]
  o- backstores .............................................................................. [...]
  | o- block .................................................................. [Storage Objects: 0]
  | o- fileio ................................................................. [Storage Objects: 1]
  | | o- sbd-node1 .................... [/iscsi_target/example-ha-vm1 (100.0MiB) write-thru activated]
  | |   o- alua ................................................................... [ALUA Groups: 1]
  | |     o- default_tg_pt_gp ....................................... [ALUA state: Active/optimized]
  | o- pscsi .................................................................. [Storage Objects: 0]
  | o- ramdisk ................................................................ [Storage Objects: 0]
  o- iscsi ............................................................................ [Targets: 1]
  | o- iqn.2006-04.example-ha-vm1-sbdtarget.local:example-ha-vm1-sbdtarget ................ [TPGs: 1]
  |   o- tpg1 ............................................................... [no-gen-acls, no-auth]
  |     o- acls .......................................................................... [ACLs: 2]
  |     | o- iqn.2006-04.example-ha-vm1-sbdinitiator.local:example-ha-vm1-sbdinitiator .. [Mapped LUNs: 1]
  |     | | o- mapped_lun0 ..................................................... [lun0 fileio/sbd-node1 (rw)]
  |     | o- iqn.2006-04.example-ha-vm2-sbdinitiator.local:example-ha-vm2-sbdinitiator .. [Mapped LUNs: 1]
  |     |   o- mapped_lun0 ..................................................... [lun0 fileio/sbd-node1 (rw)]
  |     o- luns .......................................................................... [LUNs: 1]
  |     | o- lun0 ............................. [fileio/sbd-node1 (/iscsi_target/example-ha-vm1) (default_tg_pt_gp)]
  |     o- portals .................................................................... [Portals: 1]
  |       o- 0.0.0.0:3260 ..................................................................... [OK]
  o- loopback ......................................................................... [Targets: 0]
[root@example-ha-vm1 ~]#
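The target and initiator names in the targetcli commands follow a fixed pattern built from instance names, which makes them easy to mistype. The following sketch, with hypothetical helper names, builds those IQNs and prints the per-node targetcli commands for review instead of running them. It assumes the same 100M fileio backstore under /iscsi_target/ and the two initiator ACLs (primary and secondary) used above.

```shell
#!/usr/bin/env bash
# Hypothetical helpers that reproduce the naming scheme used in this guide:
#   target:    iqn.2006-04.<host>-sbdtarget.local:<host>-sbdtarget
#   initiator: iqn.2006-04.<host>-sbdinitiator.local:<host>-sbdinitiator
target_iqn()    { printf 'iqn.2006-04.%s-sbdtarget.local:%s-sbdtarget\n' "$1" "$1"; }
initiator_iqn() { printf 'iqn.2006-04.%s-sbdinitiator.local:%s-sbdinitiator\n' "$1" "$1"; }

# Print (do not run) the targetcli commands for one node.
# Usage: print_target_cmds SBD_NODE_NAME HOSTNAME PRIMARY_INSTANCE SECONDARY_INSTANCE
print_target_cmds() {
  local sbd_name="$1" host="$2" primary="$3" secondary="$4"
  local tiqn
  tiqn="$(target_iqn "$host")"

  echo "mkdir -p /iscsi_target/"
  echo "targetcli backstores/fileio create ${sbd_name} /iscsi_target/${host} 100M write_back=false"
  echo "targetcli iscsi/ create ${tiqn}"
  echo "targetcli iscsi/${tiqn}/tpg1/luns/ create /backstores/fileio/${sbd_name}"
  # One ACL per initiator that must be allowed to use this LUN.
  echo "targetcli iscsi/${tiqn}/tpg1/acls/ create $(initiator_iqn "$primary")"
  echo "targetcli iscsi/${tiqn}/tpg1/acls/ create $(initiator_iqn "$secondary")"
  echo "targetcli saveconfig"
}
```

For example, print_target_cmds sbd-node1 example-ha-vm1 example-ha-vm1 example-ha-vm2 prints the commands for the primary node of the sample cluster. After you check the output, you could pipe it to sudo bash on that node.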

On the primary and secondary nodes of your HA cluster, configure the compute instances to act as iSCSI initiators by running the following commands:

systemctl enable sbd
sudo systemctl enable --now iscsid iscsi
sed -i "s/^InitiatorName=.*/InitiatorName=iqn.2006-04.HOSTNAME-sbdinitiator.local:HOSTNAME-sbdinitiator/" /etc/iscsi/initiatorname.iscsi
systemctl restart iscsid iscsi
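The sed command above rewrites an existing InitiatorName= line and silently does nothing if that line is missing. The following slightly more defensive sketch, assuming GNU sed and using a hypothetical function name, also appends the line when it is absent, and accepts an alternate file path so that you can try it on a copy first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set the iSCSI initiator name for HOST in an
# initiatorname.iscsi-style file (defaults to the real file).
# Usage: set_initiator_name HOST [FILE]
set_initiator_name() {
  local host="$1" file="${2:-/etc/iscsi/initiatorname.iscsi}"
  local iqn="iqn.2006-04.${host}-sbdinitiator.local:${host}-sbdinitiator"

  if grep -q '^InitiatorName=' "$file"; then
    # Same substitution as in the guide (GNU sed in-place edit).
    sed -i "s/^InitiatorName=.*/InitiatorName=${iqn}/" "$file"
  else
    # The line is missing, so the substitution alone would change nothing.
    echo "InitiatorName=${iqn}" >> "$file"
  fi
}
```

On a node whose short hostname matches its instance name, you might run set_initiator_name "$(hostname -s)" as root, followed by systemctl restart iscsid iscsi.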

On the primary and secondary nodes of your HA cluster, load and enable the softdog kernel module by running the following commands:

modprobe softdog
echo "softdog" | sudo tee /etc/modules-load.d/softdog.conf

On the primary and secondary nodes of your HA cluster, run the following commands to discover and sign in to the iSCSI targets exposed by all three nodes of your HA cluster. This makes the SBD devices available as local block devices:

Discover all SBD targets:

sudo iscsiadm -m discovery --type=st --portal=PRIMARY_INSTANCE_NAME
sudo iscsiadm -m discovery --type=st --portal=SECONDARY_INSTANCE_NAME
sudo iscsiadm -m discovery --type=st --portal=ISCSI_TARGET_INSTANCE_NAME

Sign in to all SBD targets:

sudo iscsiadm -m node -T iqn.2006-04.PRIMARY_INSTANCE_NAME-sbdtarget.local:PRIMARY_INSTANCE_NAME-sbdtarget --portal=PRIMARY_INSTANCE_NAME --login
sudo iscsiadm -m node -T iqn.2006-04.SECONDARY_INSTANCE_NAME-sbdtarget.local:SECONDARY_INSTANCE_NAME-sbdtarget --portal=SECONDARY_INSTANCE_NAME --login
sudo iscsiadm -m node -T iqn.2006-04.ISCSI_TARGET_INSTANCE_NAME-sbdtarget.local:ISCSI_TARGET_INSTANCE_NAME-sbdtarget --portal=ISCSI_TARGET_INSTANCE_NAME --login

Enable the iSCSI initiators to automatically connect to the shared SBD storage targets whenever the compute instance boots:

sudo iscsiadm -m node -T iqn.2006-04.PRIMARY_INSTANCE_NAME-sbdtarget.local:PRIMARY_INSTANCE_NAME-sbdtarget -o update -n node.startup -v automatic
sudo iscsiadm -m node -T iqn.2006-04.SECONDARY_INSTANCE_NAME-sbdtarget.local:SECONDARY_INSTANCE_NAME-sbdtarget -o update -n node.startup -v automatic
sudo iscsiadm -m node -T iqn.2006-04.ISCSI_TARGET_INSTANCE_NAME-sbdtarget.local:ISCSI_TARGET_INSTANCE_NAME-sbdtarget -o update -n node.startup -v automatic
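Because the discovery, sign-in, and automatic-startup commands repeat for each target instance, you can loop over the instance names instead of editing nine commands by hand. This sketch keeps the order used above: discover all targets, then sign in to all of them, then enable automatic startup. The function names and the DRY_RUN variable are hypothetical; with DRY_RUN unset or set to 1, the sketch only prints the commands.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: print the command when DRY_RUN is unset or 1,
# otherwise execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Target IQN for an instance, following this guide's naming scheme.
sbd_target_iqn() { printf 'iqn.2006-04.%s-sbdtarget.local:%s-sbdtarget\n' "$1" "$1"; }

# Usage: connect_sbd_targets INSTANCE_NAME...
connect_sbd_targets() {
  local inst
  # 1. Discover the targets exposed by every instance.
  for inst in "$@"; do
    run sudo iscsiadm -m discovery --type=st --portal="$inst"
  done
  # 2. Sign in to each discovered target.
  for inst in "$@"; do
    run sudo iscsiadm -m node -T "$(sbd_target_iqn "$inst")" --portal="$inst" --login
  done
  # 3. Reconnect automatically at boot.
  for inst in "$@"; do
    run sudo iscsiadm -m node -T "$(sbd_target_iqn "$inst")" -o update -n node.startup -v automatic
  done
}
```

For example, review the output of connect_sbd_targets PRIMARY_INSTANCE_NAME SECONDARY_INSTANCE_NAME ISCSI_TARGET_INSTANCE_NAME, and then rerun it with DRY_RUN=0 to execute the commands.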

On the primary node of your HA cluster, retrieve the device ID by running the following command for each SBD node:

sbd_device_volume=$(lsscsi | grep " SBD_NODE_NAME" | awk '{print $NF}')
ls -l /dev/disk/by-id/scsi-3* | grep "$(basename "$sbd_device_volume")" | awk '{print $9}'

Replace SBD_NODE_NAME with the SBD identifier that you assigned to the node in your HA cluster. The following is an example SBD device ID:

/dev/disk/by-id/scsi-36001405a043b0d94eff4a5da93e2bd09

On the primary and secondary nodes of your HA cluster, configure the SBD daemon to use the SBD devices by running the following commands. Replace SBD_DEVICE_1_ID, SBD_DEVICE_2_ID, and SBD_DEVICE_3_ID with the device IDs that you retrieved in the previous step:

SBD_DEVICE_LIST="SBD_DEVICE_1_ID;SBD_DEVICE_2_ID;SBD_DEVICE_3_ID"
sed -i "s|^#SBD_DEVICE=.*|SBD_DEVICE=\"${SBD_DEVICE_LIST}\"|" /etc/sysconfig/sbd
sed -i "s|^SBD_WATCHDOG_DEV=.*|SBD_WATCHDOG_DEV=/dev/watchdog|" /etc/sysconfig/sbd
sed -i "s|^SBD_DELAY_START=.*|SBD_DELAY_START=160|" /etc/sysconfig/sbd

The following example shows the resulting SBD settings:

example-ha-vm1:~ # cat /etc/sysconfig/sbd | grep -i SBD_
# SBD_DEVICE specifies the devices to use for exchanging sbd messages
SBD_DEVICE="/dev/disk/by-id/scsi-36001405a043b0d94eff4a5da93e2bd09;/dev/disk/by-id/scsi-3600140553a6eafdeb114cb5871021792;/dev/disk/by-id/scsi-360014059ed78a807bca4a9f8bf39c390"
SBD_PACEMAKER=yes
SBD_STARTMODE=always
SBD_DELAY_START=160
SBD_WATCHDOG_DEV=/dev/watchdog
SBD_WATCHDOG_TIMEOUT=5
SBD_TIMEOUT_ACTION=flush,reboot
SBD_MOVE_TO_ROOT_CGROUP=auto
SBD_SYNC_RESOURCE_STARTUP=yes
SBD_OPTS=
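The SBD_DEVICE value is simply the device IDs joined with semicolons. The following sketch, assuming GNU sed (for -i and the \? operator) and using a hypothetical function name, builds that list from its arguments and applies the three settings in a single sed call. Unlike the commands above, each pattern matches its setting whether or not the line is commented out, so a rerun also updates a value that an earlier run already uncommented.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write SBD_DEVICE, SBD_WATCHDOG_DEV, and SBD_DELAY_START
# into an /etc/sysconfig/sbd-style file.
# Usage: configure_sbd_sysconfig FILE DEVICE_ID...
configure_sbd_sysconfig() {
  local file="$1"; shift
  local list
  # Join the remaining arguments with ';' (IFS only changes in the subshell).
  list="$(IFS=';'; printf '%s' "$*")"

  # "^#\?" matches the setting with or without a leading '#'.
  # A descriptive comment such as "# SBD_DEVICE specifies ..." is not matched
  # because of the space after '#'.
  sed -i \
    -e "s|^#\?SBD_DEVICE=.*|SBD_DEVICE=\"${list}\"|" \
    -e "s|^#\?SBD_WATCHDOG_DEV=.*|SBD_WATCHDOG_DEV=/dev/watchdog|" \
    -e "s|^#\?SBD_DELAY_START=.*|SBD_DELAY_START=160|" \
    "$file"
}
```

To try it safely, copy /etc/sysconfig/sbd to a scratch file, run configure_sbd_sysconfig against the copy with your three device IDs, and compare the result with diff before you apply it to the real file.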

From either node of the cluster, create the SBD header for all iSCSI devices:

SBD_TIMEOUT_SECS=60
SBD_MSGWAIT_SECS=120
for device_id in SBD_DEVICE_1_ID SBD_DEVICE_2_ID SBD_DEVICE_3_ID; do
    sbd -d "${device_id}" -1 "${SBD_TIMEOUT_SECS}" -4 "${SBD_MSGWAIT_SECS}" create
done

Replace SBD_DEVICE_1_ID, SBD_DEVICE_2_ID, and SBD_DEVICE_3_ID with the same device IDs that you set in SBD_DEVICE_LIST.

On the primary and secondary nodes of the cluster, create the /etc/systemd/system/sbd.service.d/override.conf file so that the start timeout of the sbd service accommodates SBD_DELAY_START:

mkdir -p /etc/systemd/system/sbd.service.d/
echo -e "[Service]\nTimeoutStartSec=180s" | sudo tee /etc/systemd/system/sbd.service.d/override.conf

On the primary and secondary nodes of the cluster, edit the /etc/systemd/system/corosync.service.d/override.conf file to delay the start of Corosync after a reboot:

mkdir -p /etc/systemd/system/corosync.service.d/
echo -e '[Service]\nExecStartPre=/bin/sleep 120\nTimeoutStartSec=140' | tee -a /etc/systemd/system/corosync.service.d/override.conf
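The two start timeouts above are sized to cover the delays that they wrap: 180 seconds for sbd against SBD_DELAY_START=160, and 140 seconds for Corosync against the 120-second ExecStartPre sleep. If a timeout were shorter than its delay, systemd would give up on the unit before the delay ended. The following sketch, with a hypothetical function name and an optional root directory for testing, checks that relationship and then writes both drop-ins. It overwrites each override.conf instead of appending, which assumes that these files hold no other settings that you need to keep.

```shell
#!/usr/bin/env bash
# Hypothetical helper: validate the timeout/delay pairs from this guide and
# write the sbd and corosync systemd drop-ins under ROOT (default "/").
# Usage: write_sbd_corosync_overrides [ROOT] [SBD_DELAY_START]
write_sbd_corosync_overrides() {
  local root="${1:-/}" delay_start="${2:-160}"
  local sbd_timeout=180 corosync_sleep=120 corosync_timeout=140
  local base="${root%/}/etc/systemd/system"

  # Each start timeout must be longer than the delay that it covers.
  if [ "$sbd_timeout" -le "$delay_start" ] || [ "$corosync_timeout" -le "$corosync_sleep" ]; then
    echo "start timeout must be longer than the configured delay" >&2
    return 1
  fi

  mkdir -p "$base/sbd.service.d" "$base/corosync.service.d"
  # '>' overwrites, so rerunning does not duplicate lines (unlike tee -a).
  printf '[Service]\nTimeoutStartSec=%ss\n' "$sbd_timeout" \
    > "$base/sbd.service.d/override.conf"
  printf '[Service]\nExecStartPre=/bin/sleep %s\nTimeoutStartSec=%s\n' \
    "$corosync_sleep" "$corosync_timeout" \
    > "$base/corosync.service.d/override.conf"
}
```

After you change drop-in files on a live node, run systemctl daemon-reload so that systemd reads the new settings before the next start of sbd or Corosync.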

Create the cluster

As root on either node, authorize the hacluster user. Use the command for your RHEL version:

RHEL 8 and later:

pcs host auth primary-host-name secondary-host-name

RHEL 7:

pcs cluster auth primary-host-name secondary-host-name

At the prompts, enter the hacluster username and the password that you set for the hacluster user.

Create the cluster:

RHEL 8 and later:

pcs cluster setup cluster-name primary-host-name secondary-host-name

RHEL 7:

pcs cluster setup --name cluster-name primary-host-name secondary-host-name
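The auth and setup syntax differs between RHEL 7 and RHEL 8 and later. If you automate these steps, you can select the syntax from the VERSION_ID field of /etc/os-release. The following sketch, with a hypothetical function name, only prints the commands; the cluster and host names that you pass in are the placeholders used above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the pcs auth and setup commands that match the
# RHEL major version found in an os-release file.
# Usage: pcs_cluster_cmds OS_RELEASE_FILE CLUSTER PRIMARY SECONDARY
pcs_cluster_cmds() {
  local os_release="$1" cluster="$2" primary="$3" secondary="$4"
  local version major

  # os-release is shell-compatible; source it in a subshell to read VERSION_ID.
  version="$(. "$os_release" && printf '%s' "${VERSION_ID:-}")"
  major="${version%%.*}"
  if [ -z "$major" ]; then
    echo "VERSION_ID not found in $os_release" >&2
    return 1
  fi

  if [ "$major" -ge 8 ]; then
    echo "pcs host auth $primary $secondary"
    echo "pcs cluster setup $cluster $primary $secondary"
  else
    echo "pcs cluster auth $primary $secondary"
    echo "pcs cluster setup --name $cluster $primary $secondary"
  fi
}
```

For example, pcs_cluster_cmds /etc/os-release cluster-name primary-host-name secondary-host-name prints the two commands for the local RHEL version. The auth command still prompts for the hacluster credentials when you run it.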

Edit the corosync.conf default settings

Edit the /etc/corosync/corosync.conf file on the primary host to set a more appropriate starting point for testing the fault tolerance of your HA cluster on Google Cloud.

On either host, edit the /etc/corosync/corosync.conf file by using your preferred text editor. For example:

vi /etc/corosync/corosync.conf

If the /etc/corosync/corosync.conf file is new or empty, then you can check the /etc/corosync directory for an example file to use as the base for the corosync.conf file.

In the totem section of the corosync.conf file, add the following properties with the given values:

RHEL 8 and later:

- transport: knet
- token: 20000
- token_retransmits_before_loss_const: 10
- join: 60
- max_messages: 20

For example:

totem {
    version: 2
    cluster_name: hacluster
    secauth: off
    transport: knet
    token: 20000
    token_retransmits_before_loss_const: 10
    join: 60
    max_messages: 20
}
...

RHEL 7:

- transport: udpu
- token: 20000
- token_retransmits_before_loss_const: 10
- join: 60
- max_messages: 20

For example:

totem {
    version: 2
    cluster_name: hacluster
    secauth: off
    transport: udpu
    token: 20000
    token_retransmits_before_loss_const: 10
    join: 60
    max_messages: 20
}
...

From the compute instance that contains the edited corosync.conf file, sync the Corosync configuration across the HA cluster:

RHEL 8 and later:

pcs cluster sync corosync

RHEL 7:

pcs cluster sync

Enable the cluster to start automatically at boot, and then start the cluster on all nodes:

pcs cluster enable --all
pcs cluster start --all

Confirm that the new Corosync settings are active in the cluster:

corosync-cmapctl
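corosync-cmapctl prints every key in the Corosync configuration map, so the totem values are easy to miss. The following sketch, with a hypothetical function name, reads that output from standard input and reports any of the four timing values set above that is missing or different. It assumes the key (type) = value line format that corosync-cmapctl prints, and it checks the totem.* keys rather than the runtime.config.totem.* keys.

```shell
#!/usr/bin/env bash
# Hypothetical checker: compare totem settings from corosync-cmapctl output
# (read on stdin) with the values used in this guide.
# Exits 0 and prints nothing when all four values match.
check_totem_settings() {
  awk '
    BEGIN {
      want["totem.token"] = 20000
      want["totem.token_retransmits_before_loss_const"] = 10
      want["totem.join"] = 60
      want["totem.max_messages"] = 20
    }
    # Lines look like: totem.token (u32) = 20000  -> key is $1, value is $NF.
    ($1 in want) { seen[$1] = $NF }
    END {
      bad = 0
      for (k in want) {
        if (!(k in seen)) { print "missing: " k; bad = 1 }
        else if (seen[k] + 0 != want[k]) { print k " = " seen[k] ", expected " want[k]; bad = 1 }
      }
      exit bad
    }'
}
```

On a cluster node, run corosync-cmapctl | check_totem_settings. No output and a zero exit status mean that all four values match.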

Set up fencing

RHEL images that are provided by Google Cloud include a fence_gce fencing agent that is specific to Google Cloud. You use fence_gce to create fence devices for each host VM.

To ensure that the correct sequence of events occurs after a fencing action, you also configure the operating system to delay the restart of Corosync after a compute instance is fenced. You also adjust the Pacemaker timeout for reboots to account for this delay.

You can create the fencing devices by using either of the following options:

- Use the fence_gce fencing agent, which is provided by Google Cloud.
- Use SBD-based fencing.

Create the fencing device resources

For fence_gce based fencing

To create the fencing device resources when you've opted to set up fence_gce based fencing, complete the following steps:

Connect to the compute instance in the primary site of your HA cluster by using SSH or your preferred method.

As the root user, create a fencing device for the primary host:

pcs stonith create primary-fence-name fence_gce \
    port=primary-host-name \
    zone=primary-host-zone \
    project=project-id \
    pcmk_reboot_timeout=300 pcmk_monitor_retries=4 pcmk_delay_max=30 \
    op monitor interval="300s" timeout="120s" \
    op start interval="0" timeout="60s"

As the root user, create a fencing device for the secondary host:

pcs stonith create secondary-fence-name fence_gce \
    port=secondary-host-name \
    zone=secondary-host-zone \
    project=project-id \
    pcmk_reboot_timeout=300 pcmk_monitor_retries=4 \
    op monitor interval="300s" timeout="120s" \
    op start interval="0" timeout="60s"

Constrain each fencing device to the other host VM:

pcs constraint location primary-fence-name avoids primary-host-name
pcs constraint location secondary-fence-name avoids secondary-host-name

Test the secondary fencing device:

Shut down the secondary host VM:

fence_gce -o off -n secondary-host-name --zone=secondary-host-zone

If the command is successful, then you lose connectivity to the secondary host VM and it appears stopped on the VM instances page in the Google Cloud console. You might need to refresh the page.

Restart the secondary host VM:

fence_gce -o on -n secondary-host-name --zone=secondary-host-zone
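Instead of refreshing the console while you test fencing, you can poll the power state from the shell. The following generic sketch, with a hypothetical function name, reruns a command until its output matches a pattern or a timeout expires. Using it with fence_gce assumes that the agent supports the standard status action of fence agents and reports the power state as ON or OFF; confirm the exact output on your system before you rely on the pattern.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rerun COMMAND until its combined stdout/stderr
# matches PATTERN (grep basic regex), or fail after TIMEOUT seconds.
# Usage: wait_for_output PATTERN TIMEOUT_SECS INTERVAL_SECS COMMAND [ARGS...]
wait_for_output() {
  local pattern="$1" timeout="$2" interval="$3"
  shift 3
  local waited=0

  until "$@" 2>&1 | grep -q -- "$pattern"; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "timed out after ${timeout}s waiting for: $pattern" >&2
      return 1
    fi
    sleep "$interval"
    waited=$((waited + interval))
  done
}
```

For example, after the off command you might run wait_for_output OFF 300 10 fence_gce -o status -n secondary-host-name --zone=secondary-host-zone, and after the on command the same call with ON as the pattern.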